A Machine Learning Model for Detecting Malware Outbreaks Using Only a Single Malware Sample

Machine learning (ML) has become an important part of the modern cybersecurity landscape, where massive amounts of threat data must be gathered and processed so that security solutions can swiftly and accurately detect and analyze new and unique malware variants without requiring extensive resources. Machine learning algorithms, however, are typically trained on large datasets. Malware outbreaks therefore pose a challenge for machine learning in security, since samples are scarce during the critical first hours.

In our research paper entitled “Generative Malware Outbreak Detection,” we demonstrated how machine learning technology for security solutions can identify a malware variant not only from large quantities of malware samples but also from only a small handful of observable variants. But how effective is machine learning if the only information available is from a single sample?

We answer this question in our collaborative study with researchers from the Federation University Australia entitled “One-Shot Malware Outbreak Detection Using Spatio-Temporal Isomorphic Dynamic Features.” We studied how machine learning, specifically a generative adversarial autoencoder, performs dynamic malware detection when only a single malware sample is available.

Similarities between malware variants of the same family

Modern cyberattacks often involve an outbreak of malware that spans multiple organizations, industries, and regions. Perhaps the most infamous example is 2017’s WannaCry attack, which was estimated to have affected 200,000 machines across 150 countries.

Furthermore, malware families evolve constantly. Threat actors not only add capabilities to older malware variants but also create new ones that differ enough to make it difficult for security researchers to identify them and correlate them with existing variants. Often, security researchers have only a small number of samples of these new variants to work with.

Despite the complexity of modern malware families, the variants that comprise these families share a behaviourally similar pattern at their core. For example, ransomware will typically download an encryption key from a C&C server, after which files in target machines will be enumerated and encrypted. The final step will be the delivery of a ransom note demanding payment from the victim. This kind of phenomenon extends to other malware types, which will all invariably exhibit similar behaviour over time.
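As a rough illustration of what "behaviourally similar" can mean in practice, the sketch below compares ordered lists of API call events with Python's standard `difflib.SequenceMatcher`. This is not the method used in the paper; the event names and the three example logs are hypothetical and chosen only to mirror the ransomware steps described above.

```python
from difflib import SequenceMatcher
from typing import List


def behaviour_similarity(log_a: List[str], log_b: List[str]) -> float:
    """Return a 0..1 similarity score between two ordered API call event lists."""
    # autojunk=False keeps every event in play, even very frequent ones.
    return SequenceMatcher(None, log_a, log_b, autojunk=False).ratio()


# Hypothetical logs: two ransomware variants and one unrelated benign program.
variant_a = ["connect", "recv_key", "list_dir", "read_file",
             "encrypt", "write_file", "drop_note"]
variant_b = ["connect", "recv_key", "list_dir", "read_file",
             "encrypt", "write_file", "delete_shadow", "drop_note"]
benign = ["open_window", "read_file", "render", "write_file", "close_window"]

if __name__ == "__main__":
    print("variant vs variant:", behaviour_similarity(variant_a, variant_b))
    print("variant vs benign: ", behaviour_similarity(variant_a, benign))
```

In this toy setup the two variants score much closer to each other than either does to the benign log, even though the second variant adds an extra step.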

The method described in our paper uses an adversarial neural network to analyze features extracted from the API call events of malware samples, in order to create accurate representations of malware variants while differentiating them from previously unseen benign samples.

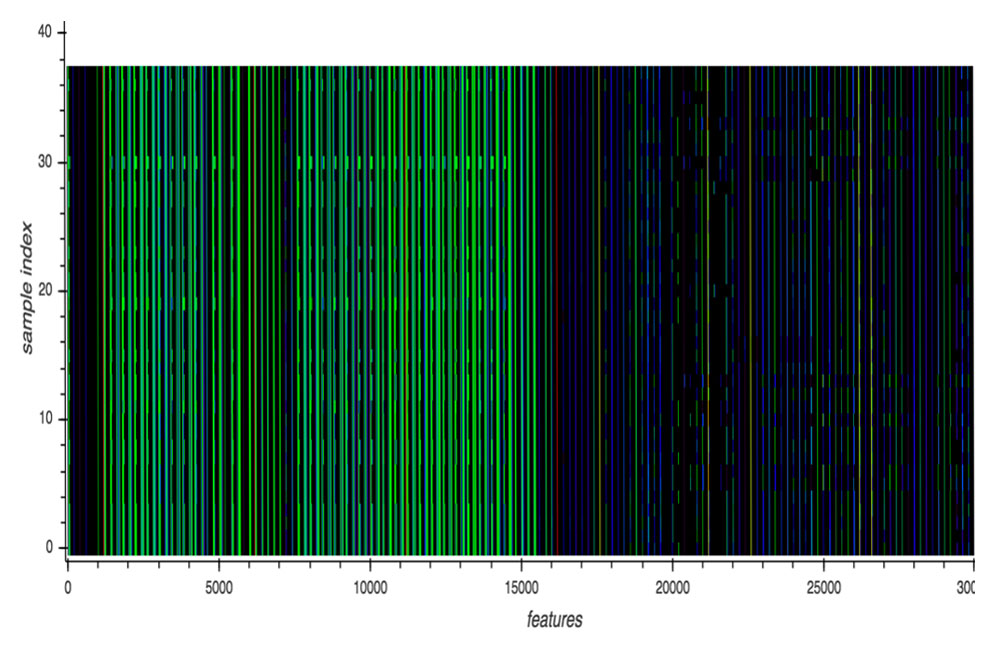

A malware sample’s behaviour can be seen in its dynamic execution log, which consists of a sequence of API call events, each made up of an API identifier and its corresponding API arguments. This dynamic execution log serves as input to the deep learning model. The figure illustrates the API call events of different variants of a malware family. Although each variant is different, the visual representations of their API call events are very similar in structure.

Visualization of the API call events of variants of a malware family

Note: Each row represents a per-sample feature, which is a sequence of API call events made by a malware sample. Each normalized API call event is rendered as a pixel with a unique color assigned via a lookup table. The X-axis is the feature while the Y-axis is the sample number. This cluster contains 38 samples (HO_WINPLYER.MSMIU18, 15 samples; OSX_Agent.PFL, 3 samples; OSX_Generic.PFL, 1 sample; OSX_SearchPage.PFM, 18 samples; OSX_WINPLYER.RSMSMIU18, 1 sample).
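The rendering described in the note can be sketched roughly as follows. This is an illustrative assumption about how such a visualization might be built, not the code used for the figure: the event names, the fixed row width, and the color formula are all hypothetical. Each distinct API call event gets an integer ID from a lookup table, each log becomes one fixed-width row, and each ID maps to one RGB color.

```python
from typing import Dict, List, Tuple

PAD_ID = 0  # reserved for padding and for events missing from the lookup table


def build_lookup(logs: List[List[str]]) -> Dict[str, int]:
    """Assign each distinct API call event an integer ID, in order of first appearance."""
    lookup: Dict[str, int] = {}
    for log in logs:
        for event in log:
            if event not in lookup:
                lookup[event] = len(lookup) + 1
    return lookup


def encode_row(log: List[str], lookup: Dict[str, int], width: int) -> List[int]:
    """Turn one execution log into a row of IDs, truncated or padded to `width`."""
    ids = [lookup.get(event, PAD_ID) for event in log[:width]]
    return ids + [PAD_ID] * (width - len(ids))


def to_rgb(event_id: int) -> Tuple[int, int, int]:
    """Map an ID to an RGB pixel; distinct IDs below 2**24 get distinct colors."""
    # Multiplying by an odd constant modulo 2**24 is a bijection on 24-bit values.
    code = (event_id * 0x9E3779) % (1 << 24)
    return (code >> 16) & 0xFF, (code >> 8) & 0xFF, code & 0xFF


# Hypothetical logs for two samples of the same family.
logs = [["open", "read", "connect"], ["open", "read", "write", "connect"]]
lookup = build_lookup(logs)
rows = [encode_row(log, lookup, width=5) for log in logs]
pixels = [[to_rgb(i) for i in row] for row in rows]

if __name__ == "__main__":
    for row in rows:
        print(row)
```

Stacking the rows gives an image with the feature position on the X-axis and the sample number on the Y-axis, matching the layout described in the note.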

Comparison between machine learning models

To evaluate our proposed model, the generative adversarial autoencoder using mean squared error (MSE) as autoencoder loss (aae-mse), we compared it to other machine learning models, namely, Gradient Boosting, Support Vector Machine, and Random Forest. We collected the dynamic execution logs of 2,855 in-the-wild Mac OS X malware samples from a proprietary commercial sandbox along with 7,541 benign Mac OS X samples. The dynamic execution logs were analyzed and labeled by human experts, who focused on similarities in API call event sequences. We then identified 353 unique API call sequence patterns among the 2,855 malicious samples and used those for training the model. The 7,541 benign samples were not included in the training of the proposed model but were instead split into training and testing sets for the baseline models.

For the evaluation, we simulated an outbreak by assigning a unique label to each of the 353 unique malicious training samples. Our goal was to gauge how accurately the machine learning model’s predictions matched the labels. We found that the baseline models were not effective for detecting outbreaks when trained with a very small sample size. On the other hand, aae-mse showed 99.1% true positives along with 0.1% false positives with the MSE decision threshold, which influences how much the model generalizes its detection, set to 0.000025.
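A minimal sketch of how an MSE decision threshold could be applied is shown below. It assumes that a sample is flagged as part of the outbreak when its reconstruction error is at or below the threshold; that direction, the feature values, and the `toy_reconstruct` stand-in (which simply returns the single training sample instead of running a trained autoencoder) are assumptions for illustration only. The threshold value is the one reported above.

```python
from typing import Callable, List, Sequence, Tuple

MSE_THRESHOLD = 0.000025  # decision threshold reported in the article


def mse(x: Sequence[float], x_hat: Sequence[float]) -> float:
    """Mean squared error between an input vector and its reconstruction."""
    return sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x)


def matches_outbreak(x: Sequence[float],
                     reconstruct: Callable[[Sequence[float]], Sequence[float]],
                     threshold: float = MSE_THRESHOLD) -> bool:
    """Flag a sample when its reconstruction error is at or below the threshold."""
    return mse(x, reconstruct(x)) <= threshold


def rates(predictions: List[bool], labels: List[bool]) -> Tuple[float, float]:
    """Return (true positive rate, false positive rate); needs both classes present."""
    tp = sum(p and l for p, l in zip(predictions, labels))
    fp = sum(p and not l for p, l in zip(predictions, labels))
    positives = sum(labels)
    negatives = len(labels) - positives
    return tp / positives, fp / negatives


# Hypothetical normalized feature vectors.
training_sample = [0.1, 0.2, 0.3, 0.4]
variant = [0.1, 0.2, 0.3, 0.404]   # near-identical behaviour
benign = [0.9, 0.1, 0.5, 0.4]      # unrelated behaviour


def toy_reconstruct(x: Sequence[float]) -> Sequence[float]:
    """Stand-in for a trained autoencoder: always reproduces the training sample."""
    return training_sample


if __name__ == "__main__":
    preds = [matches_outbreak(v, toy_reconstruct) for v in (variant, benign)]
    print(preds, rates(preds, [True, False]))
```

Under this assumption, raising the threshold lets the detector accept samples that deviate more from the training sample, while lowering it makes the match stricter.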

To learn more about our proposed machine learning model for cybersecurity and the study results, read our research paper entitled “One-Shot Malware Outbreak Detection Using Spatio-Temporal Isomorphic Dynamic Features.” It was presented at the 18th IEEE International Conference on Trust, Security and Privacy in Computing and Communications / 13th IEEE International Conference on Big Data Science and Engineering 2019 Conference and Exhibition, with an updated version soon to be available in the IEEE Xplore Digital Library.
