
Dilemma of smart factory 3: Security risks

This series of blog posts explains the security risks inherent in the design of legacy languages and the risk mitigation measures that all users of industrial robots should take. This part clarifies the essence of the problems hidden in task programs.

Industrial robots are at the core of manufacturing automation in smart factories, and are the most important components because they support the manufacture of all kinds of products, such as automobiles, aircraft, processed foods, and pharmaceuticals. In addition, in the post-COVID-19 world, where minimal or no contact is a necessity, industrial robots that can repeatedly execute specified movements with high accuracy are regaining attention as equipment that makes unmanned manufacturing possible. However, it is not commonly known that industrial robots are programmed using languages designed decades ago.

Trend Micro has been conducting cybersecurity research on smart factories since 2017, and has discovered vulnerabilities in the "task programs" that define the behavior of industrial robots, as well as design flaws in the "programming languages" themselves. These are legacy languages that were designed decades ago, but they are still used in modern smart factories for reasons such as maintaining compatibility with successor models and reducing the burden of relearning.

In this series, based on the results of our third joint research project with the Polytechnic University of Milan, we analyze the security risks inherent in the design of legacy languages and, from a short- to long-term perspective, the risk mitigation measures that all users of industrial robots should take. In this third installment, we clarify the essence of the problems hidden in task programs, based on our analysis of the programming languages of eight major vendors in the industrial robot industry.

Fundamental security risk: "Flat access" to "basic functions"

As explained in the previous article, it is possible to steal information and cause physical damage by exploiting vulnerabilities in the task programs of industrial robots. At first glance, the vulnerabilities discovered in this research appear to be "vulnerabilities resulting from poor coding" of the kind that tends to occur in web application development. If that were the whole story, thorough secure coding would be an effective countermeasure, but the problems hidden in the task programs of industrial robots are a little more complicated.

The task programs of industrial robots are written in "proprietary languages designed decades ago." From a security perspective, the closed factory environments of 20 years ago and the modern smart factory environments shaped by technological innovation are totally different. It is therefore quite possible that the security standards in place when the languages were developed do not match those of today. Based on this hypothesis, Trend Micro analyzed a total of 100 task programs written in the programming languages of eight major vendors in the industrial robot industry, in an attempt to determine the "security risks of language design itself." As a result, we found that the programming languages of industrial robots carry a technical security risk: "basic functions of systems (lower system resources) can be used unconditionally without any confirmation of permissions." The essence of the problem is that the functions required to drive the robot are implemented, but there are no mechanisms to prevent malicious people from using those functions.

The specifications of these programming languages are highly platform-dependent. As mentioned earlier, an industrial robot is a complex cyber-physical system in which a program, a controller, and a driving part work in close coordination. Therefore, the problem lies not only in the programming language but also in the platform, that is, the robot controller. The language problem confirmed in this research arises because the controller, which is the platform, has no mechanism for confirming permissions. The programming language side does not appear to have any mechanism for invoking a permission check either.

Now let's take a closer look at the problem.

Platform for which "flat access" is possible

Decades ago, when the programming languages for industrial robots were designed, the concept of smart factories did not exist, and closed factory environments were the norm. So it is not surprising that they were not designed with active external attackers in mind. In comparison, systems with modern designs, such as smartphones, reflect security designs that assume malicious attacks. For example, when developing an app for Android in Java, as described in the developer documentation, an explicit permission request is required to access resources outside the sandbox of the app itself. Therefore, even if a malicious developer sneaks an app containing malware onto a mobile app store, the app must still request permission to use the microphone, network, and other low-level system resources that the malware needs in order to operate (for example, when the app is launched for the first time, the message "The app XX is requesting access to the microphone. Do you want to allow it?" is displayed).

Such permission settings are also necessary for industrial robots. However, at present, there is no such requirement for industrial automation platforms, and all resources have "flat" access. In other words, the task program can use lower-level resources beyond the scope of permissions that it should have.
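To make the contrast concrete, the following is a minimal Python sketch rather than vendor code. It models a platform with flat access next to a hypothetical platform that checks a per-program grant list, in the spirit of the smartphone example above. The class names, resource names, and the `grants` structure are all assumptions made for illustration; no robot controller is known to expose this interface.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


class PermissionDenied(Exception):
    """Raised when a task program uses a resource it was not granted."""


class FlatController:
    """Toy model of flat access: every request is served unconditionally."""

    def use(self, program: str, resource: str) -> str:
        return f"{program} used {resource}"


@dataclass
class GatedController:
    """Toy model of a platform that confirms permissions (hypothetical)."""

    # Maps each task program name to the resources it has been granted.
    grants: Dict[str, Set[str]] = field(default_factory=dict)

    def use(self, program: str, resource: str) -> str:
        if resource not in self.grants.get(program, set()):
            raise PermissionDenied(f"{program} may not use {resource}")
        return f"{program} used {resource}"


# Flat access: a motion-only task program can still reach the network.
flat = FlatController()
print(flat.use("pick_and_place", "network"))

# Gated access: the same request is refused because it was never granted.
gated = GatedController(grants={"pick_and_place": {"motion"}})
print(gated.use("pick_and_place", "motion"))
try:
    gated.use("pick_and_place", "network")
except PermissionDenied as exc:
    print("blocked:", exc)
```

In the flat model there is simply no place where a refusal could happen, which is the structural gap this section describes.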

What happens when "basic functions" are abused

The combination of the flat access mentioned above and the basic software functions of the programming languages dramatically increases the security risks of industrial robots. Below, let's take a look at the basic functions of programming languages for industrial robots.

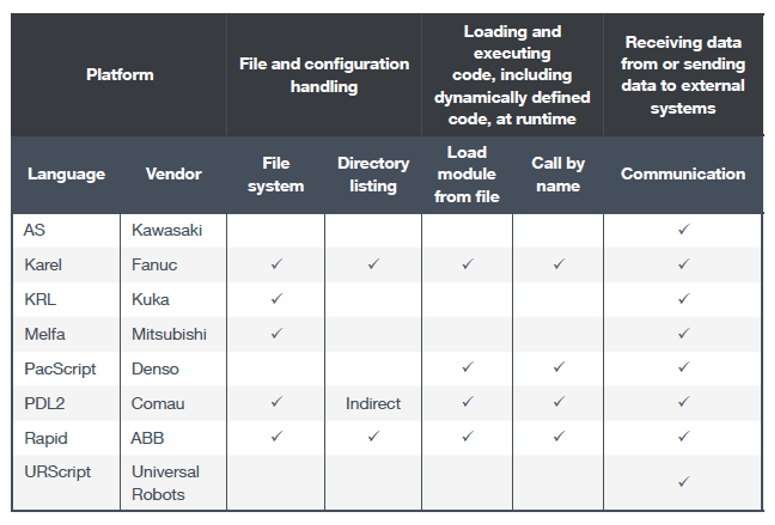

Industrial robot programming languages are used to define robot movements, but the software itself has many more features. The manufacturers' proprietary programming languages investigated in this research not only support the file system concept found in ordinary computing systems, but also offer functions such as function pointers and dynamic code reading. As shown in Figure 1, the software of industrial robot vendors provides enough functionality to act as a web server.

Fig. 1: List of basic functions possessed by the task program of each company

As mentioned above, system resources can be used without any restrictions, allowing an attacker to exploit these basic functions. By taking full advantage of these basic features, it is even possible to write malware on a robot platform. Below, let's look at the true role of each feature and what happens if they are abused.

[File and directory access]

Programming languages for which the "File system" and "Directory listing" items are checked have basic functions for opening, reading, and writing to files and directories. These functions are used, for example, to access configuration parameters, write log information, and save program statuses.

Although these features are legitimate in nature, they can be exploited to leak information or load attack code.
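As a rough illustration of how a legitimate file function can be turned toward information leakage, the Python sketch below resolves a file name supplied from outside, first with no validation and then with a containment check. Both function names and the file names are hypothetical, and Python stands in for a robot language here; the point is only that an unchecked name containing `..` can reach files outside the directory the program was meant to use.

```python
import tempfile
from pathlib import Path


def resolve_unchecked(base_dir: str, requested: str) -> Path:
    """Join the requested name onto the base directory with no validation."""
    return (Path(base_dir) / requested).resolve()


def resolve_checked(base_dir: str, requested: str) -> Path:
    """Resolve the path and refuse anything outside the base directory."""
    base = Path(base_dir).resolve()
    target = (base / requested).resolve()
    if target != base and base not in target.parents:
        raise ValueError(f"refusing path outside {base}: {requested}")
    return target


with tempfile.TemporaryDirectory() as log_dir:
    # A normal log file stays inside the log directory.
    print(resolve_checked(log_dir, "run.log"))

    # Without validation, "../" escapes to the parent directory.
    print(resolve_unchecked(log_dir, "../controller.cfg"))

    # With the containment check, the same request is rejected.
    try:
        resolve_checked(log_dir, "../controller.cfg")
    except ValueError as exc:
        print("blocked:", exc)
```

A check like this is something the task program author has to remember to write. Under flat access, nothing in the platform enforces it.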

[Reading and executing code]

Programming languages for which the "Load module from file" and "Call by name" items are checked have functions similar to function pointers in general programming languages. These features allow control process engineers to create modular programs by leveraging dynamic calling procedures. In addition, these features can change the flow of task programs at runtime, making them one of the most powerful and dangerous features.

These features are inherently very useful when implementing complex task processes that combine modular programs. On the other hand, they can become vulnerabilities that are exploited to deploy dropper-type malware and to execute code remotely.
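The "Call by name" idea can be sketched in a few lines of Python. This is an analogy, not any vendor's syntax: the routine names, the `ROUTINES` table, and the `ALLOWED` set are invented for illustration. The first dispatcher runs whatever name it is given, which is convenient for modular programs but lets anyone who controls the name redirect the program flow. The second only dispatches names the author explicitly expected.

```python
from typing import Callable, Dict


def move_home() -> str:
    return "moving to home position"


def open_gripper() -> str:
    return "gripper opened"


def erase_logs() -> str:
    # Stands in for any routine the task author never meant to expose.
    return "logs erased"


# Every routine loaded on the (toy) controller, keyed by name.
ROUTINES: Dict[str, Callable[[], str]] = {
    "move_home": move_home,
    "open_gripper": open_gripper,
    "erase_logs": erase_logs,
}

# Names the task program is expected to call at runtime.
ALLOWED = frozenset({"move_home", "open_gripper"})


def call_by_name(name: str) -> str:
    """Dispatch purely on the supplied name, with no restriction."""
    return ROUTINES[name]()


def call_by_name_checked(name: str) -> str:
    """Dispatch only when the name is on the allowlist."""
    if name not in ALLOWED:
        raise PermissionError(f"routine not allowed: {name}")
    return ROUTINES[name]()


# If the name arrives from outside, the unchecked version runs anything.
print(call_by_name("erase_logs"))
try:
    call_by_name_checked("erase_logs")
except PermissionError as exc:
    print("blocked:", exc)
```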

[Transmission and reception of data to and from external systems]

"Communication" is a function that has been confirmed to exist in all languages. This function facilitates cooperation between industrial robots and external systems. Example applications include receiving real-time position coordinates from an external program, interlocking with a vision system, and sending feedback to an external system to be recorded as logs.

The problem here is that while the platforms have network communication capabilities, they completely lack the ability to authenticate and control access at the programming language level. Developers and integrators can use authentication functions to set access restrictions for cases where a robot controller is operated from an external location, but they cannot restrict the network communication of task programs executed on the controller. Therefore, if a task program containing malicious code is written to a robot controller, it can communicate externally without any restrictions. Under this kind of specification, it is extremely difficult to create a task program that performs external communication safely, so there is a limit to how far security risks can be reduced through the efforts of task program developers alone.

Security risks specific to proprietary languages

In addition to the above technical features, there are also risks due to the "uniqueness" of each robot language. For common programming languages (C, C++, C#, Java, PHP, Python, etc.), there are code checkers (e.g., static program analysis tools) that detect insecure patterns. No such tools exist for each company's robot programming language, so one concern is that security checks will be difficult to automate.
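For readers unfamiliar with what such a checker does, here is a deliberately small Python sketch of pattern-based static analysis. The task language it scans is invented for this example: `CALL_BY_NAME`, `OPEN_FILE`, `SOCKET_CONNECT`, and `RECEIVE` are hypothetical keywords, not the syntax of any real vendor language, and the rules are assumptions about what might be worth flagging. A real checker would need a parser and rule set for each proprietary language, which is exactly the per-vendor effort described above.

```python
import re
from typing import List, Tuple

# Hypothetical rules for an invented task language. Each rule flags a
# construct whose argument is a variable rather than a fixed literal,
# or any outbound connection at all.
RULES = [
    ("dynamic call by name", re.compile(r"\bCALL_BY_NAME\s*\(\s*[a-z_]\w*\s*\)")),
    ("file opened from variable", re.compile(r"\bOPEN_FILE\s*\(\s*[a-z_]\w*\s*\)")),
    ("outbound socket", re.compile(r"\bSOCKET_CONNECT\b")),
]


def scan(source: str) -> List[Tuple[int, str]]:
    """Return (line number, rule name) for every rule hit in the source."""
    findings = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for name, pattern in RULES:
            if pattern.search(line):
                findings.append((lineno, name))
    return findings


# Invented sample program: a name received from outside is called directly.
SAMPLE = """\
name = RECEIVE(port)
CALL_BY_NAME(name)
OPEN_FILE("config.txt")
SOCKET_CONNECT("10.0.0.5", 4444)
"""

for lineno, rule in scan(SAMPLE):
    print(f"line {lineno}: {rule}")
```

The literal file name on the third line is not flagged, while the variable-driven call and the outbound connection are. Simple line-based patterns like these miss data flow across lines, which is one reason dedicated tools are needed.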

Industrial robot programming languages are not based on a common runtime or architecture like mainstream operating systems, but on the platform (robot controller) of each manufacturer. For this reason, each robot vendor also has to prepare its own proprietary programming language and the environment on which the program execution runtime is based. There are programming languages and environments based on real-time operating systems (RTOS), but generally they are not standardized. In addition, each language has its own semantics (criteria for judging whether variables and statements used in the source code work correctly), and these may be very different from those of general-purpose programming languages. In fact, some of the languages we surveyed do not have features such as string manipulation or cryptographic operations.

These characteristics also tend to limit the choices available to users. Since the programming environment of an industrial robot cannot be easily replaced, it is neither easy nor practical for users to correct these design flaws. In addition, each company's language is not only central to current industrial automation but also creates strong technical lock-in, which inevitably increases the cost of migrating to other platforms. Therefore, it is very likely that users will have to accept the security risks of the robot platform they use.

Cybersecurity risks continue to lurk in the core technologies that support manufacturing. This is the modern dilemma of smart factories.

So far, we have analyzed the security risks of the platform technologies that support the automation of industrial robots. In the next and final installment, we will propose security mitigation measures that industrial robot vendors, integrators, and users should implement from a short- to long-term perspective.