By Vladimir Kropotov and Fyodor Yarochkin (Principal Researchers, Forward-Looking Threat Research Team, TrendAI™ Research); Kirill Gelfand (Strategic Solutions Architect, TrendAI™)

Key takeaways:

- AI skills, which are executable knowledge artifacts that combine human-readable instructions with LLM-interpretable logic, represent a critical emerging attack surface. Organizations increasingly encode operational expertise, decision-making criteria, and automation workflows into skills consumed by AI agents, creating high-value intelligence targets that reveal critical organizational processes.

- Organizations are adopting AI automation and AI skills to address human resource shortages especially in high-pressure environments such as security operations centers (SOCs) and managed security service providers (MSSPs). AI adoption allows these organizations to significantly improve performance and address the growing cost of security talent. However, this adoption comes with new associated risks that must be addressed.

- SOCs face acute risk from AI skills compromise. Attackers who gain access to SOC skills can learn alert triage logic, correlation rules, incident response procedures, and reporting metrics, enabling them to evade detection, manipulate severity classifications, and disable automated defensive responses.

- AI skills are being adopted across critical sectors, each facing sector-specific security risks. Financial services face strategy theft and threshold manipulation. Healthcare skills can affect clinical protocols, research and patient data. Industrial sectors might be vulnerable to sabotage and R&D theft. Public sector skills enable potential manipulation of data used for strategic decisions. Technology and media sectors face data exfiltration and reputational damage risks.

- These threats and risks are blind spots for most traditional security tools. Traditional security tools are tailored to monitor for attack signatures and patterns, and therefore are simply not prepared to detect malicious content in unstructured text data. It is necessary for security tools to understand the semantics of AI skill content to be capable of detecting and addressing associated risks.

AI adoption accelerated rapidly in 2025, yet for most organizations, meaningful and valuable integration remains a work in progress. Scaling AI comes with its obstacles, including rigid system architectures, limited domain-specific functionality, and difficulties in customizing solutions, to name a few.

AI skills have emerged as an answer to these constraints, allowing organizations to turn experimental AI capabilities into executable and scalable operations. In this article, we explore this emerging facet of AI, examining not only its potential but also its possible drawbacks, especially from a security perspective.

What is an AI skill?

An AI skill is an artifact that represents a hybrid knowledge format, combining human-readable text with instructions that large language models (LLMs) can interpret and execute. AI skills encapsulate everything, from elements like human expertise, workflows, and operational constraints, to decision logic. By capturing this knowledge into something executable, AI skills enable organizations to achieve scalability and knowledge transfer at previously unattainable levels.

Anthropic, the developer of the Claude family of LLMs, formally refers to AI skills as Agent Skills and defines them as “organized folders of instructions, scripts, and resources that agents can discover and load dynamically to perform better at specific tasks.” Aside from Anthropic’s Agent Skills, examples of skills provided by well-known AI platforms include GPT Actions by OpenAI and Copilot Plugins by Microsoft.

Figure 1. An example of a Claude skill for handling weekly updates automation in Atlassian Confluence group meeting minutes

An emerging blind spot

The wide adoption of AI skills also bears significant impact on the security posture and defense capabilities of many organizations, especially in cases of “shadow AI,” where AI tools are used within an organization without formal approval or oversight. For example, wider adoption of AI automation, as with any automation, could make organizational automated responses more predictable to attackers. By gaining access to an organization’s AI automation corpus, attackers can recreate it in a sandbox environment and use this environment to test their detection evasion techniques before using them on a targeted organization.

Many of today’s security defense tools lack the capabilities to effectively detect, analyze, and mitigate threats from unstructured text data, which AI skills are. The recent popularity of OpenClaw (aka ClawBot or MoltBot) and the security issues that brought it to wide adoption constitute a good example of the associated risks and blind spots. To address such issues, AI security solutions must be capable of comprehending the semantical content of the AI skill corpus.

What is the impact of AI skills on critical environments?

AI skills represent a significant boost in how organizations can leverage AI across different critical sectors. In this section, we discuss the advantages they offer in different sectors and examine the implications of domain-specific skills publicly available today.

Knowledge preservation and digital twins creation

Modern AI environments enable preservation of institutional knowledge in executable and operationally useful forms. AI skills further amplify this ability. Because skills can capture expertise, skills can be combined to create virtual personalities, which in turn can be synthesized into digital twins that simulate the behavior, tasks, and approaches of human specialists.

This capability provides substantial advantages, including:

- Accelerated knowledge transfer for critical business processes.

- Streamlined onboarding of new team members.

- Minimized impact of expertise loss when personnel leave an organization.

- Scalable automation of previously manual or expert-driven workflows.

AI skills adoption in critical industries

As we’ve established, skills add to the AI functionality of knowledge preservation and transfer, which, if honed for specific critical industries, can significantly simplify automatization for more focused business processes.

Over the coming months, we anticipate the development and acceleration of skills for key critical verticals. Today, we already observe a variety of skills for the following sectors:

- Financial services: automation for market analysis, investments and trading

- Technology, media, and communication: skills that leverage content generation (e.g., web assets and media), optimization, and analysis

- Industrial, energy, and manufacturing: skills for computational materials science and numerical simulation workflows

- Healthcare and life sciences: synthetic healthcare data generation

- Public sector: a variety of options for skills that allow for faster, more accurate service delivery and improved customer experience (e.g., providing assistance in filling out and verifying documents and forms, calculating statistics and metrics, and answering standard questions)

Public exposure of AI skills

When we examine these domain-specific AI skills more closely, potential drawbacks become more apparent.

The threat of unintended exposure is not theoretical.

Public GitHub repositories already host domain-specific skills that expose sensitive operational parameters. For example, the “Awesome Claude Skills” collection catalogs hundreds of community-built skills spanning multiple industries, including:

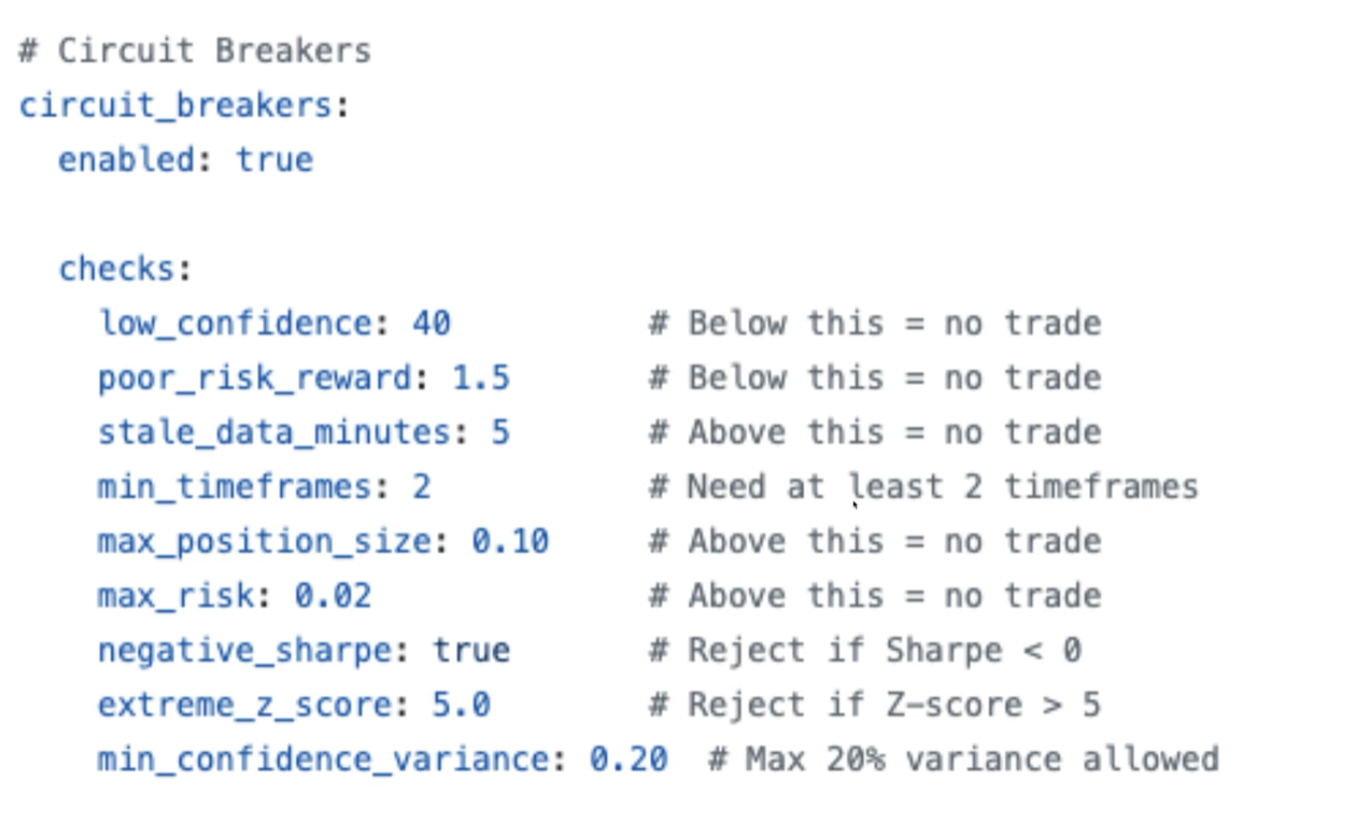

- Financial trading skills containing hard-coded risk thresholds, position limits, and circuit-breaker configurations.

- Healthcare simulation skills encoding clinical decision logic.

- Materials science skills containing proprietary and simulation parameters.

- Marketing automation skills with campaign strategies and audience-targeting logic.

- Security operations skills documenting detection methodologies and response playbooks.

For example, as seen in Figure 2, a cryptocurrency trading skill publicly exposes circuit breaker thresholds, allowing attackers to craft trades specifically designed to evade automated risk controls.

Figure 2. A screenshot of a cryptocurrency trading skill that publicly exposes circuit breaker thresholds

Similar exposure patterns exist across other verticals where skills encode operational decision boundaries.

In short, AI skills adoption spans multiple critical sectors, but each sector presents unique security implications, as summarized in Table 1.

| Vertical | Skill examples | Compromise risks |

|---|---|---|

| Financial services |

|

|

| Healthcare |

|

|

| Industrial/Energy/Manufacturing |

|

|

| Public sector |

|

|

| Technology/Media/Communications |

|

|

Table 1. AI skills adoption and its security implications across critical sectors

What threat model and attack vectors do AI skills introduce?

The emerging exposure patterns highlight the need to examine the broader threat model and attack vectors introduced by AI skills.

AI skills as an unconventional attack surface

AI skills represent an underestimated attack surface.

In essence, LLM skills are a form of knowledge preservation. This capability can produce both highly beneficial and potentially harmful outcomes.

Among the positives:

- Domain-knowledge analysts can distill their knowledge into shareable “skills,” enabling others (possibly less-experienced engineers in that domain) to reuse these capabilities in their own workflows. This makes the knowledge more widely available and accessible across the organization.

- Skills can automate processes, such as in security monitoring, where AI-enabled security operations centers (SOCs) automate monitoring and initial triage.

However, these same advantages introduce new risks. AI skills expand an organization’s attack surface. Attackers will choose to seek out vulnerabilities not only in the underlying components that AI skills depend on but also in the skill logic itself.

Moreover, an AI skill is itself a form of proprietary data. Skills might contain sensitive operational information, such as thresholds and triggers an organization uses in its processes or an organization’s handling procedures for sensitive data. Risks become apparent in all major critical industries. Knowledge about thresholds, for example, can be leveraged to manipulate the severity of notifications or exploit decision-making logic.

If an attacker gains access to the logic behind a skill, it can give them substantial opportunity for exploitation. An attacker might also simply decide to trade or leak acquired data, thus exposing sensitive organizational information. The risks for these attack scenarios are particularly acute for AI-enabled SOCs.

SOC-specific implications and injection attack vectors

SOCs face unique risks when integrating AI skills into their processes. Attackers can analyze scenarios within an AI skill and attempt to exploit its execution logic. The core challenge lies in the fact that skills inherently introduce a heightened risk for injection-based attack vectors.

AI skills mix user-supplied data with user-supplied instructions, and skill definitions might also mix both data and instructions and can reference external data sources. This combination of data and executable logic creates an ambiguity, which in turn makes it difficult for defense tools — and even the AI engine itself — to safely differentiate between genuine analyst instructions and attacker-supplied content. Hence, the inability to defend against injection attacks.

As a result, AI skills become susceptible to AI-native injection attacks mirroring classic exploitation attacks, like SQL injection, but in the context of an AI engine. AI skills inadvertently create conditions for AI variants of traditional injection attacks, where malicious content can manipulate LLM execution logic.

Escalation path: From tactical to strategic

Unauthorized access to AI skills leads to a dangerous escalation path.

When collected systematically by a malicious attacker, AI skills can reveal critical organizational business processes that reveal sensitive insights into how an enterprise operates, makes decisions, and defends itself.

The more AI skills attackers compromise, the more advantages they gain:

- One or two skills provide tactical advantage (e.g., identifying specific detection blind spots)

- Over 20 skills enable strategic modeling (e.g., provide a complete understanding of SOC priorities)

- A complete skill set enables full behavioral prediction and digital twin creation (e.g., construction of digital twins of security analysts)

The cumulative impact of such breach grows exponentially.

Once AI skills have been compromised, attackers retain long-term knowledge into an organization’s security posture, which is unlikely to change much over time. Preventing unauthorized access to AI skills is therefore essential to eliminate this entire escalation path.

Threats have evolved as predicted

The risks we outlined here directly align with earlier industry predictions. TrendAI™’s security predictions for 2025 warned of “malicious digital twins,” scenarios where breached personal information trains LLMs to mimic employee knowledge, personality, and writing style. AI skills now provide the exact building blocks for such attacks.

This urgency was further validated in November 2025, when Anthropic disclosed the first documented large-scale AI-orchestrated cyberespionage campaign, which executed 80 to 90% of operations autonomously.

How should organizations govern and protect AI skills?

It’s clear that organizations must proactively prevent skill-related risks as they embed skills into their processes. In this section, we outline measures to safeguard the use of AI skills and ensure that the benefits of adoption do not come at the cost of security.

Kill chain model for AI skill compromise

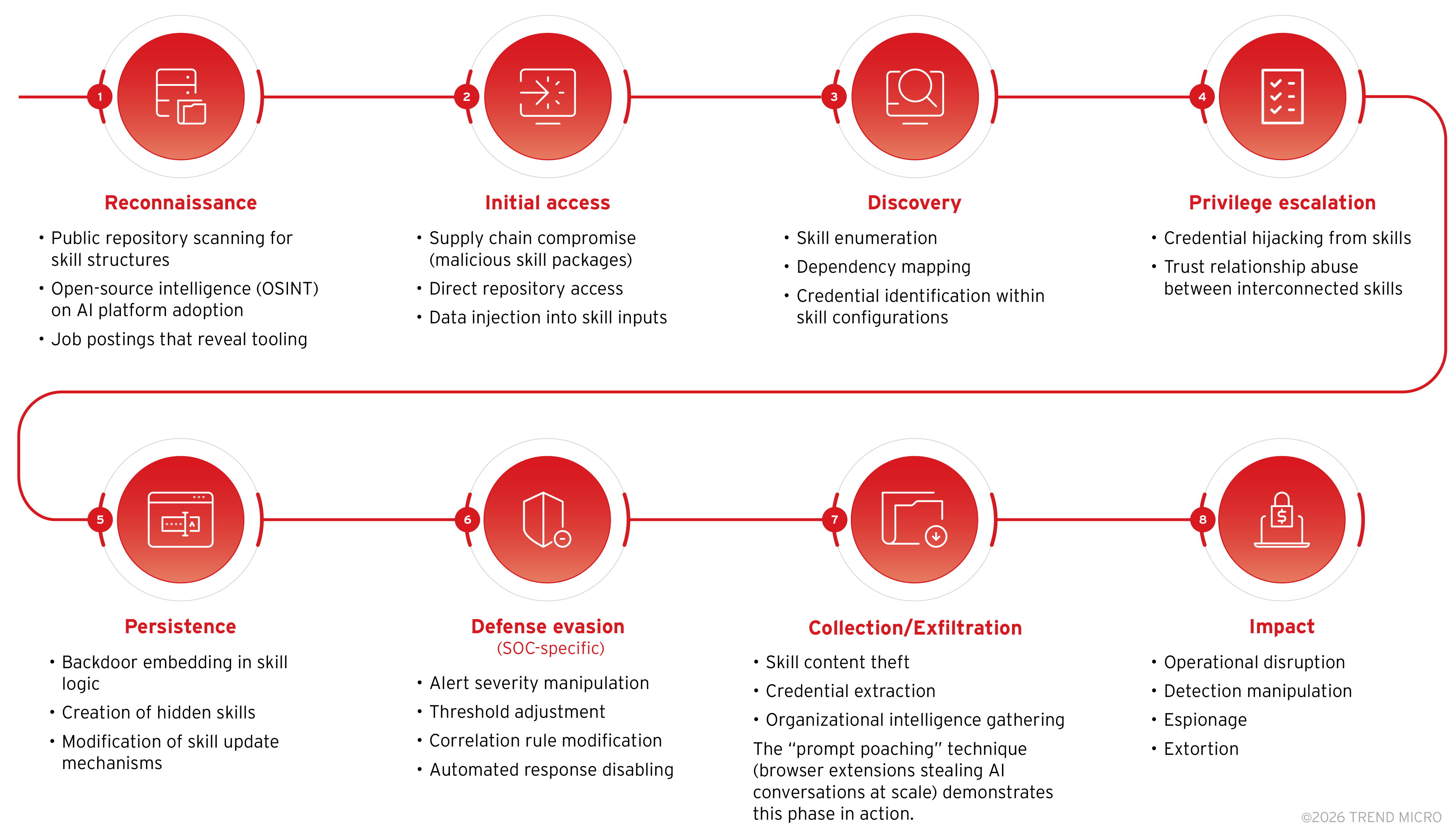

Traditional kill chains inadequately address AI skill–based attacks. Traditional kill chain models, designed for threats like network intrusion, malware, or credential compromise, do not fully encompass the unique characteristics of AI skill–based attacks.

To address this gap, we propose an eight-phase kill chain model, illustrated in Figure 3, which is specifically tailored to AI skill compromise by reflecting the full life cycle of how adversaries can discover, exploit, and leverage skills to achieve both tactical and strategic objectives.

Figure 3. Eight-phase kill chain model tailored to AI skill compromise

Detection and response framework

To complement the eight-phase kill chain, we also outline key indicators and mitigations of AI skill compromise. Our detection architecture and response framework are designed to guide organizations in detecting suspicious activity early and responding quickly to emerging threat scenarios.

Detection opportunities

Organizations should monitor for multiple complementary signals, each indicative of a different stage or technique in the compromise life cycle, as outlined in Table 2. Together, these signals form a comprehensive early-warning system for AI-driven threats.

| Detection category | Indicators |

|---|---|

| Skill integrity monitoring |

|

| Execution anomalies |

|

| Credential access patterns |

|

| Data flow anomalies |

|

| SOC logic manipulation |

|

Table 2. Indicators for detecting AI skill compromise

Recommended detection architecture

We propose the multilayered detection architecture outlined in Table 3, which is aligned with the our eight-phase kill chain.

| Layer | Data source | Detection focus |

|---|---|---|

| Repository |

|

|

| Platform |

|

|

| Network |

|

|

| Credentials |

|

|

| SOC output |

|

|

Table 3. Multilayered detection architecture aligned with the eight-phase kill chain

Phase-aligned mitigations

To further operationalize the guidance in this model, we map concrete mitigation strategies directly to each phase of our proposed kill chain, as outlined in Table 4.

| Phase | Primary control | Detection opportunity |

|---|---|---|

| Reconnaissance |

|

|

| Initial access |

|

|

| Discovery/Escalation |

|

|

| Persistence |

|

|

| Defense evasion |

|

|

| Exfiltration/Impact |

|

|

Table 4. Mitigations mapped to the eight-phase kill chain

Security principles for safeguarding AI skills

Beyond the technical mitigations we have outlined, organizations should revisit established security best practices and apply them to the integration and management of AI skills.

Here are tested principles to apply throughout the AI skill life cycle:

- Treat skills as sensitive intellectual property. Apply risk assessment and mitigation procedures throughout the entire life cycle. Implement proper access control, versioning, and change management. Enable appropriate access controls through proper classification and labeling.

- Separate instructions from untrusted data. Many skills operate on user-supplied data, creating exploitation opportunities. Maintain clear separation between skill logic, skill data, and untrusted external data.

- Constrain execution privileges. Apply least-privilege principles when designing skills. Limit execution context to minimum required permissions to prevent lateral movement during compromise.

- Validate against adversarial scenarios. Understand the organization’s attack surface. Test how malicious users might exploit skill operational logic before deployment.

- Implement comprehensive monitoring. Monitoring, logging, and auditing are critical for the secure operation of any business process, especially AI-enabled environments where traditional security boundaries blur.

Conclusion

AI skills represent an emerging attack surface requiring dedicated security attention, especially in environments where shadow AI introduces unmanaged or inconsistently governed knowledge artifacts. As organizations encode more operational expertise into executable knowledge artifacts, the value of these assets to attackers grows proportionally.

For SOCs specifically, an AI skill compromise threatens the foundations of defensive operations. Attackers who understand SOC AI skills can learn how to suppress alerts, evade correlation rules, disable automated responses, and manipulate reporting, effectively blinding defenders.

As a thought leader, TrendAI™ continues to research AI security threats and develop detection capabilities and mitigation strategies for AI-enabled environments. Organizations should begin with immediate actions: Inventory existing skills, classify by sensitivity and access requirements, baseline normal execution patterns, isolate high-privilege skills, and monitor for anomalies continuously.

About the authors

The Forward-Looking Threat Research Team of TrendAI™ Research is a group that specializes in scouting technology for one to three years in the future, with a focus on three distinct aspects: technology evolution, its social impacts, and criminal applications. As such, the team has been keeping a close eye on AI and its potential misuses since 2020, when the team authored, in collaboration with Europol and the United Nations Interregional Crime and Justice Research Institute (UNICRI), a research paper on this very topic.

Like it? Add this infographic to your site:

1. Click on the box below. 2. Press Ctrl+A to select all. 3. Press Ctrl+C to copy. 4. Paste the code into your page (Ctrl+V).

Image will appear the same size as you see above.

Messages récents

- The Hidden Risk in Your AI Rollout: Your Endpoints

- When AI Becomes a Zero-Day Machine: What Public Sector Organizations Need to Know

- A Data-Driven View of Cyber Risk Structure: How Attack Pressure and Exposure Shape Damage

- Hunt Them All: An AI-Powered Vulnerability Sweep of 19,000 MCP Servers

- Pwning Agentic AI Part I: Your AI Agent Is Already Compromised

Fault Lines in the AI Ecosystem: TrendAI™ State of AI Security Report

Fault Lines in the AI Ecosystem: TrendAI™ State of AI Security Report It’s By Design: The Use-After-Free of Azure Cloud

It’s By Design: The Use-After-Free of Azure Cloud Ransomware Spotlight: Agenda

Ransomware Spotlight: Agenda Guarding LLMs With a Layered Prompt Injection Representation

Guarding LLMs With a Layered Prompt Injection Representation