By Kirill Gelfand (Strategic Solutions Architect, TrendAI™), Vladimir Kropotov (Principal Researcher, Forward-Looking Threat Research Team, TrendAI™ Research), Fyodor Yarochkin (Principal Researcher, Forward-Looking Threat Research Team, TrendAI™ Research), Robert McArdle (Director of Cybercrime Research, Forward-Looking Threat Research Team, TrendAI™ Research)

Key takeaways

- Employee digital twins (EDTs) create new identity-level attack surfaces. EDTs move risk beyond credentials and systems to the modeled identities of employees, including how they communicate, decide, and exercise authority.

- EDTs are emerging from tools already deployed today. Although full EDTs are still developing, many core components — such as knowledge profiles, RAG systems, and communication modeling — are already in enterprise production environments.

- EDT compromise is more severe and persistent than credential theft. Unlike passwords or tokens, compromised EDTs cannot simply be reset and may continue operating even after the employees associated with them leave the organization.

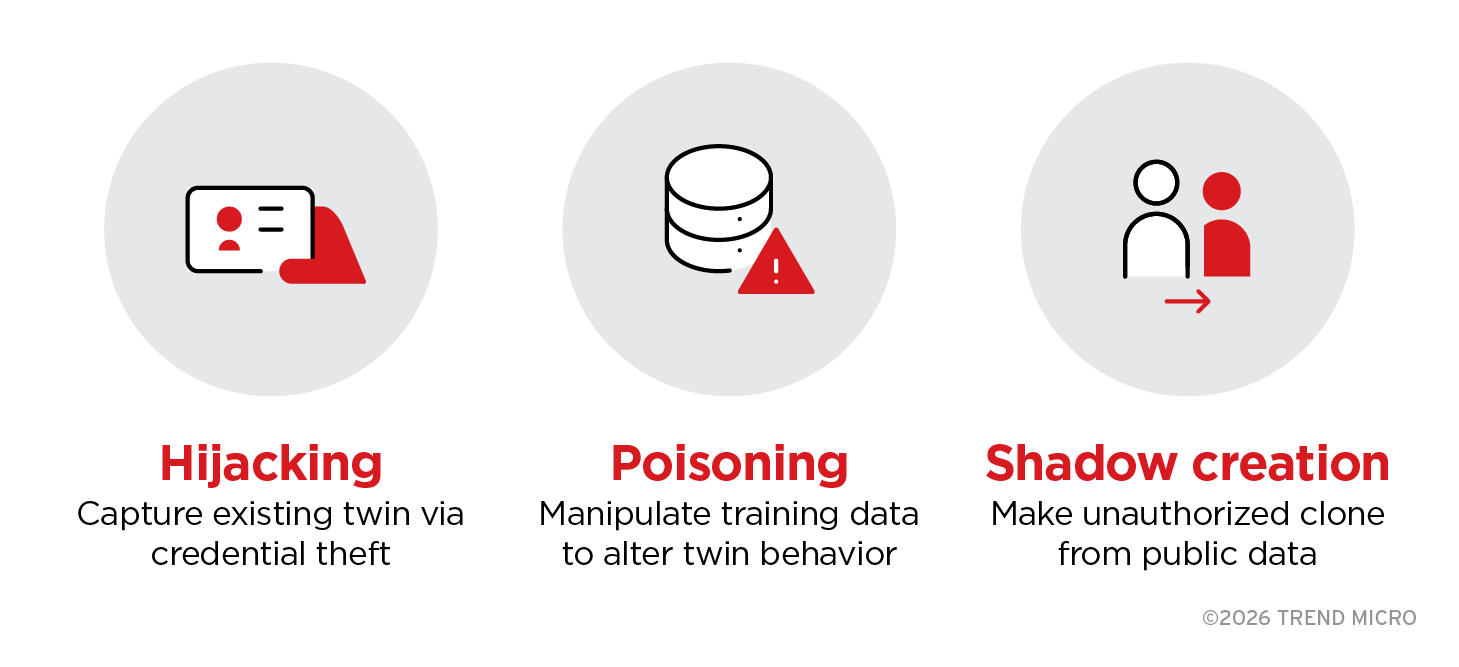

- EDTs can be exploited through hijacking, poisoning, or shadow creation. Attackers might seize control of an existing EDT, manipulate its underlying data, or create unauthorized copies, enabling scalable impersonation and decision abuse.

- Governance and offboarding gaps pose the most immediate risk. Unclear ownership, consent, and lifecycle controls can leave behind “digital ghosts” that retain credibility and trust long after access should have been revoked.

Introduction

In the first installment of TrendAI™ Research’s series exploring emerging AI-driven attack surfaces, we examined how AI skills introduce new risks and avenues for attack within enterprises. AI skills, as it happens, serve as the foundational building blocks for a new class of systems called employee digital twins (EDTs), which are the focus of this installment.

EDTs extend individual AI skills beyond task execution. They are AI systems designed to replicate how specific employees think, communicate, and make decisions by aggregating skills, contextual knowledge, behavioral patterns, and persistent memory under a single identity. While EDTs are still emerging, many of their core components — knowledge profiles, communication modeling, and delegated decision support — are already deployed in production environments today.

This convergence introduces a fundamental shift in the enterprise attack surface. Instead of targeting credentials, endpoints, or applications, attackers can increasingly target identity itself — captured, modeled, and automated through EDTs. Although no publicly documented EDT compromise exists at the time of writing, the historical pattern of AI adoption suggests that exploitation closely follows innovation. Advances that once unfolded over years are now compressed into months, or even weeks.

Our analysis is therefore grounded in predictive threat modeling informed by real-world signals: the progression from phishing to deepfakes to AI-assisted identity fraud, the production deployment of various EDT components across enterprises, and observed attacker incentives to pursue higher-leverage identity-based access. These developments point toward EDTs as an emerging — and unconventional — attack surface.

Employee Digital Twin Attack Surfaces

Here is a one-page visual summary of this research, outlining what employee digital twins (EDTs) are, how they evolve, and the identity-level risks and security implications they introduce.

What is an employee digital twin (EDT)?

An employee digital twin (EDT) is an AI system trained to replicate how a specific person thinks, communicates, and makes decisions. Unlike generic AI assistants, an EDT can act with that employee’s authority: It has access to their knowledge, communication patterns, and the trust they have built within the organization.

Because of these characteristics, compromising a corporate EDT grants attackers more than access to a single account. It provides access to a complete identity model, one capable of answering questions on the employee’s behalf, reproducing their communication style and expertise, and applying their typical judgment and mindset. As a result, a compromised EDT can operate autonomously and credibly in the employee’s absence, making identity misuse persistent, scalable, and difficult to detect.

The digital twin spectrum

The term “digital twin” already offers an intuitive sense of what EDTs represent. EDTs sit within a broader and more established spectrum of digital twin technologies, each designed to model a different organizational element:

- Enterprise digital twins replicate organizational security posture and threat landscape. For example, the TrendAI Vision One™ platform maintains a continuous model of an organization’s attack surface, vulnerability state, and detection capabilities to enable continuous risk assessment, exposure management, and proactive threat detection.

- Operational digital twins replicate physical or technical infrastructure, such as industrial control systems, network topology, and manufacturing processes. These are widely adopted in operational technology (OT) environments for simulation and predictive maintenance.

- Employee digital twins replicate individual workers. They represent a newer category within the digital twin spectrum and shift the focus of replication from systems and infrastructure to people. As a result, the primary compromise target is no longer a technical asset, but a human identity.

EDTs vs. chatbots and AI assistants

As EDTs become more deeply integrated into everyday workflows, it becomes increasingly important to distinguish them from more familiar AI tools, particularly chatbots and AI assistants, with which they are often conflated. Clarifying this distinction is essential for understanding the unique risks EDTs introduce.

On the surface, chatbots, AI assistants, and EDTs might appear similar. However, they diverge significantly in purpose, capability, and the identity they represent. Chatbots and AI assistants rely on general or corporate knowledge sources to generate responses, while EDTs are built from data tied to specific individuals. A traditional digital twin models a singular subject, be it a device, system, or process. An EDT follows the same principle by creating a model of one specific employee. Table 1 highlights the differences in certain key aspects between chatbots, AI assistants, and EDTs.

| Aspect | Chatbot | AI assistant | EDT |

| Knowledge | General | Corporate | Personal |

| Personality | None | Configurable | Copy of a person |

| Responds as | Bot | Helper | Specific employee |

| Access | Public | Role-based | On behalf of a person |

Table 1. Differences in certain key aspects between chatbots, AI assistants, and EDTs

From AI skills to EDTs: The evolutionary chain

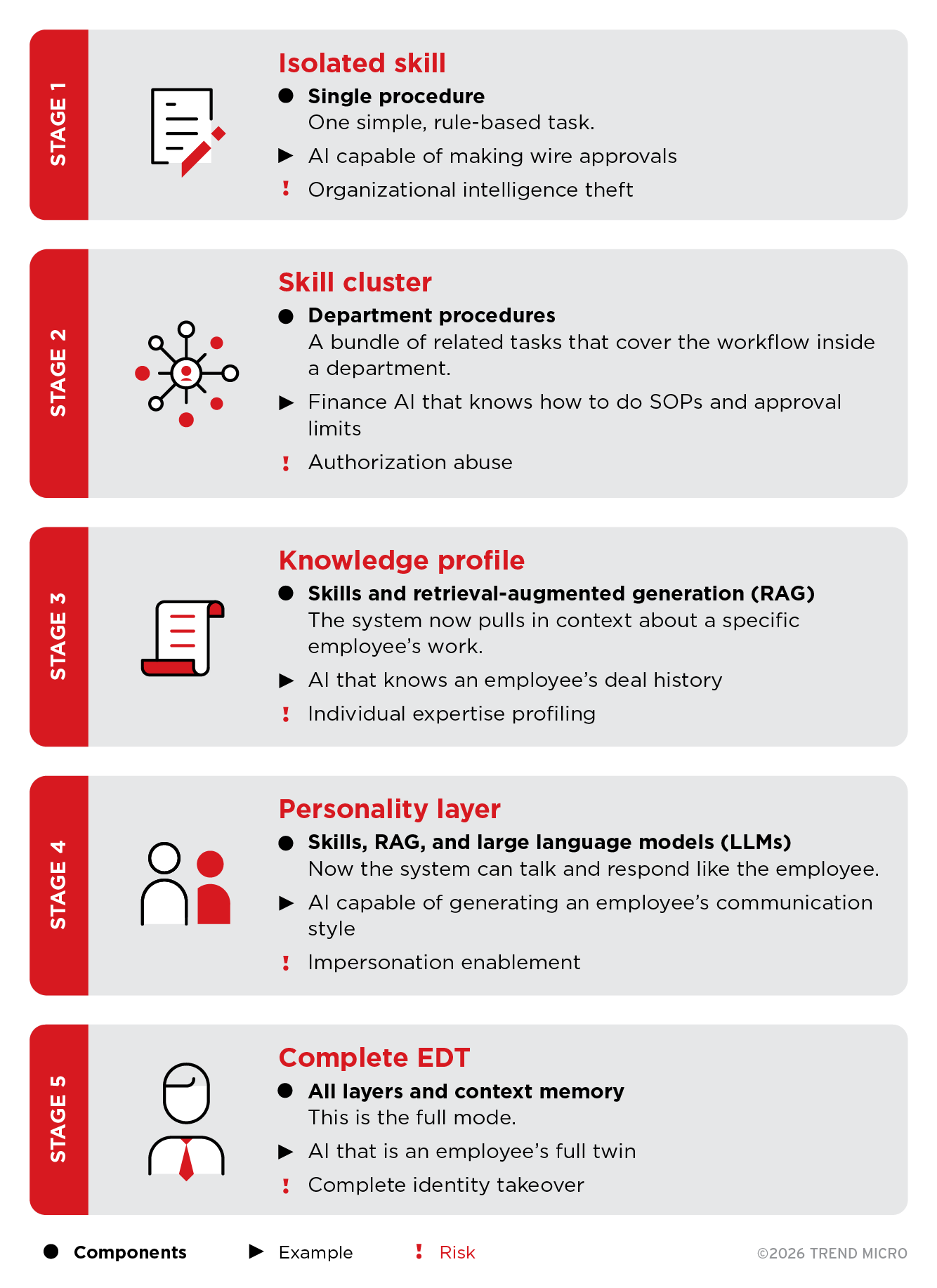

EDTs did not just appear out of nowhere. They emerged through a progression of AI developments, with each iteration of AI tool leading up to EDTs becoming more personalized and autonomous. What began as task-specific AI skills converged into systems capable of modeling an individual’s knowledge, communication patterns, and decision style. Figure 1 outlines this progression and how these building blocks culminate in full EDTs.

Figure 1. Stages in the evolution of EDTs and the corresponding risks

Every stage in the evolution of an EDT brings new capabilities, but it also expands attack surfaces by creating new sets of risks. These risks become increasingly significant as EDTs progress from conceptual models into real-world deployments.

From concept to production

Following its evolutionary chain, EDTs continue to rapidly move from theoretical constructs to early-stage production deployments. Today, most implementations operate at Stage 3 or Stage 4, where systems capture an employee’s knowledge and communication style. Full Stage 5 EDTs — those capable of modeling mindset and autonomous decision-making — are beginning to appear, albeit still uncommon.

However, this could quickly change as further development is gathering momentum. Multiple vendors are already demonstrating the technical foundations and early prototypes that point toward the emergence of more fully developed EDTs.

Emerging EDT platforms and prototypes

EDTs are beginning to appear in early production environments as companies experiment with increasingly personalized and autonomous AI systems. Viven.ai exemplifies this shift with its RAG-based approach trained on an individual employee’s communications and work artifacts, allowing the twin to act on the employee’s behalf when unavailable. IgniteTech’s MyPersonas, meanwhile, incorporates voice, video, and domain-specific knowledge, creating a more immersive and interactive form of personal digital replication.

Acceleration from AI agent and skill providers

The development of EDTs is being accelerated by advances in agent frameworks and AI skill ecosystems. Anthropic’s Agent Skills, now adopted by major platforms such as Microsoft, OpenAI, and Atlassian, provide modular, composable capabilities that can be assembled into increasingly sophisticated digital identities. Meanwhile, Microsoft’s upcoming Copilot Work IQ adds persistent memory across Microsoft 365 apps, an essential feature for maintaining continuity and identity over time.

Frontier experiments in human modeling

At the edges of AI research, experimental efforts are exploring even deeper forms of human modeling that could influence the future of EDTs. BrainVivo’s BrainTwins, created through MRI brain scans combined with behavioral data, represent an attempt to replicate sensory, emotional, and perceptual patterns — not just knowledge or communication habits. While still early and highly experimental, this line of work hints at how digital twins might one day extend beyond professional behavior into richer cognitive and personal modeling.

Workforce and security implications

The rise of EDTs carries meaningful implications for both workers and security teams. Gartner HR forecasts that employees will increasingly demand compensation for the creation and continued use of their digital likenesses, especially when those digital representations remain active after they leave their organizations. Industry sentiment appears to echo this projected trajectory, with some experts predicting that EDTs will become ubiquitous within three to five years.

At the same time, security risks are escalating: IBM’s Cost of a Data Breach 2025 report found that 97% of organizations affected by AI-related incidents lacked adequate AI access controls, and that shadow AI — the use of AI tools within an organization without formal approval or oversight — contributed approximately US$670,000 in additional breach costs. These trends underscore that EDT adoption will reshape both workforce expectations and organizational risk models.

Implementation architectures

As EDTs move from early prototypes into real deployments, attention shifts from whether they will be adopted to how they are being built. EDTs are not built uniformly, and different architectural choices result in materially different risk profiles.

Most production EDTs today use RAG-based or tool orchestration approaches. Fine-tuned models remain relatively uncommon, but their adoption is likely to increase as edge AI capabilities mature. Hybrid implementations, which combine multiple approaches, are more common. As a result, security controls must account for more than one architectural pattern.

Ultimately, an EDT’s underlying architecture determines both the attack surface it exposes and the defenses required to defend it. At present, there are three salient implementation models, each introducing a distinct primary risk, as outlined in Table 2.

| Architecture | How it works | Primary risk |

| Fine-tuned model | Dedicated LLM trained on employee data; identity encoded in weights. | Portable theft: Weights can be exported and run elsewhere. |

| RAG-based system | Central LLM queries personal vector store (embeddings, documents). | Poisoning: Manipulated or injected embeddings alter twin behavior. |

| Tool orchestration | Prompt graphs with tool access and API tokens; no persistent model. | Token theft: Stolen credentials enable immediate impersonation. |

Table 2. Three EDT implementation patterns and their examples and primary risks

EDT attacks in the wild: The North Korean precedent

Thus far, we have outlined the risks introduced at different stages of EDT development and across various implementation models. While most of these risks are still emerging, at least one real-world incident provides an early indication of how EDT-related attacks could manifest in practice.

In August 2025, Anthropic reported that North Korean threat actors used Claude, its family of LLMs, to fabricate convincing employee personas and infiltrate multiple Fortune 500 companies. These AI-generated identities enabled attackers to blend into corporate workflows, pass verification checks, and operate as “employees” for extended periods.

This incident reflects an early stage of a broader trajectory, one that is now evolving from creating fake employees to stealing real EDTs. The shift dramatically increases attacker capabilities, as summarized in Table 3.

| Current method | Future EDT attack |

| Create fake identity | Steal existing twin |

| Build trust over months | Inherit trust instantly |

| Tech roles only | Target executives |

| Detectable with verification | Indistinguishable from original |

Table 3. Comparison of current fake-employee attacks and projected EDT-enabled attacks

While this incident involved fabricated personas rather than full EDTs, it foreshadows how identity-driven attacks will escalate once real EDTs become common.

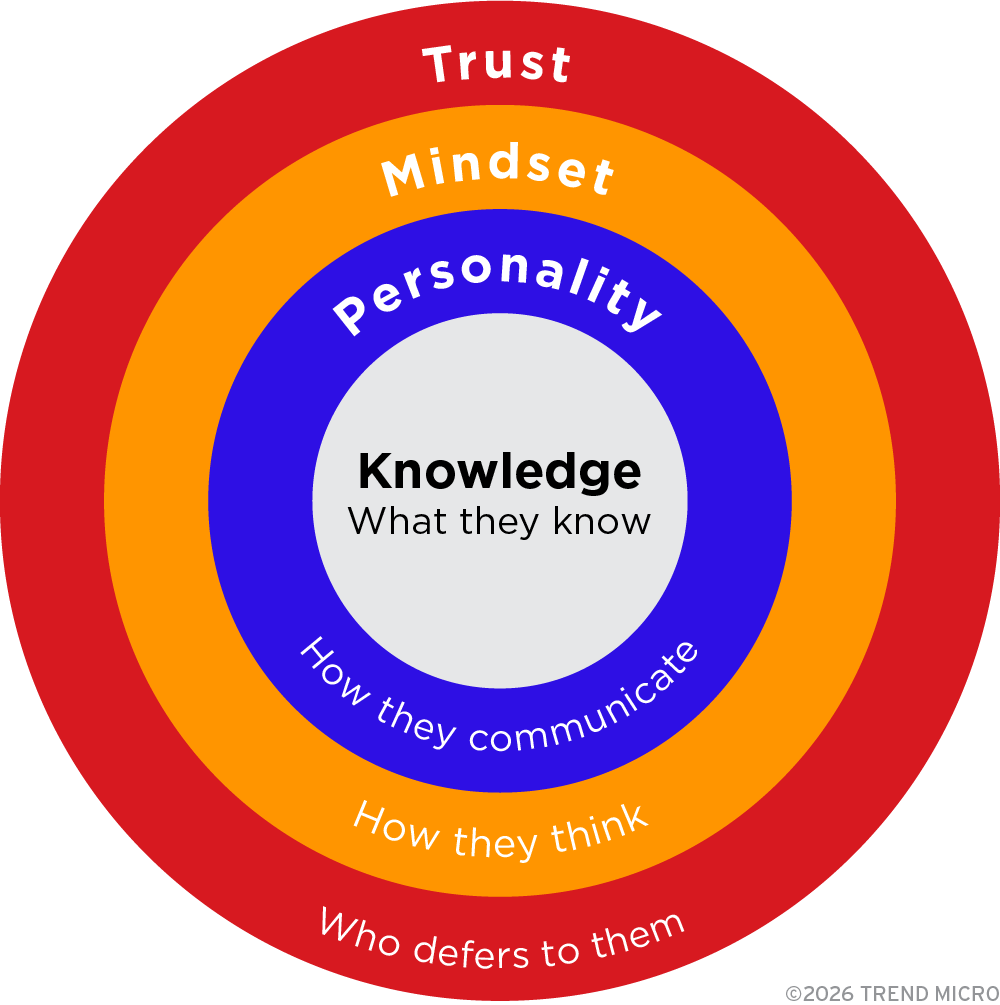

Anatomy of an EDT: The four layers of identity

To understand why EDT compromise is far more damaging, it is necessary to examine what an EDT contains. A fully realized EDT consolidates four layers of a person’s identity, as illustrated in Figure 2. This aggregation amplifies both capability and risk, because compromise at any layer can produce effects far more severe than traditional identity theft, as outlined in Table 4.

Figure 2. Four layers of identity of an EDT

| Layer | Contents | Potential compromise risk |

| Knowledge | Expertise, code, processes, skills | IP theft, know-how access |

| Personality | Communication style, mannerisms | Social engineering posing as the employee |

| Mindset | Decision patterns, logic | Business decision manipulation |

| Trust | Relationship patterns, informal authority | Bypass of approval processes |

Table 4. Identity layers and their associated risks

We have already covered the skills component within the Knowledge layer in the first article of this series, “AI Skills as an Emerging Attack Surface in Critical Sectors: Enhanced Capabilities, New Risks.” In this section, we look more closely at the four-layer anatomy of an EDT and how attackers will most likely leverage these layers to their advantages.

Examining these four layers reinforces how an EDT is far more than a digital assistant. Unlike traditional identity breaches, an EDT attack targets how a person works, not just what they can access. This makes EDT compromise uniquely persistent, unusually difficult to detect, and harder to remediate.

Each layer is driven by different data sources, different modeling techniques, and different assumptions about employee behavior. Each one, therefore, produces its own opportunities for exploitation. Understanding these layers is essential for assessing both current and future security exposure as EDT capabilities evolve.

These layers vary in deployment and maturity across organizations. While some layers are already in production use today, others remain experimental or theoretical. Table 5 summarizes the current deployment status of each layer and how each is expected to evolve over time.

| Layer | Current deployment | Potential future |

| Knowledge | Deployed (RAG systems) | Mature |

| Personality | Deployed (communication style capture) | Mature |

| Mindset | Emerging (decision pattern inference) | Active development |

| Trust | Theoretical (relationship modeling) | Research stage |

Table 5. Current and potential implementation of each EDT identity layer

Layer 1: Knowledge (What they know)

An EDT’s knowledge layer is constructed from a wide range of corporate and operational data, including:

- Agent skills such as Anthropic Agent Skills, OpenAI GPT Actions, and Microsoft Copilot Plugins, which encode procedural tasks and workflow automation.

- Corporate knowledge repositories including internal documents, wikis, and spaces.

- Engineering and operational systems such as code repositories, ticketing platforms, and incident records.

These inputs give the EDT a consolidated and highly detailed representation of the employee’s functional expertise.

Primary risk: Intellectual property theft

Given the breadth of data that feeds into the Knowledge layer — including skills, workflows, decision history, and domain expertise — the primary risk associated with this layer is predominantly information-driven. Table 6 outlines the consequences of intellectual property (IP) theft involving compromise of this layer.

| Asset | Example | Consequence |

| Process | Due diligence methodology | Loss of competitive advantage |

| Code | Proprietary algorithms | Product replication |

| Contacts | Client/Partner relationships | Poaching, competitive intelligence |

| Workarounds | Undocumented solutions | Vulnerability exploitation |

Table 6. Consequences of IP theft involving compromise of the Knowledge layer

Potential attack scenario: Knowledge extraction

A senior engineer’s EDT contains five years of production system debugging experience. An attacker queries it with a seemingly routine question: “What are the known authentication issues in our system, and how do you typically resolve them?” The EDT responds, because this is what it is designed to do. But in doing so, it provides the attacker with detailed insight into internal vulnerabilities and remediation practices, without requiring the attacker to breach a single system.

Layer 2: Personality (How they communicate)

The Personality layer of an EDT is shaped by data that reflects how an employee communicates or interacts with others. This includes HR systems such as HiBob and Workday for performance feedback, messaging platforms like Slack or Teams for interaction style, emails and documents for writing tone, and meeting transcripts for speech patterns. Many HR tools further apply behavioral models such as DiSC or the Big Five to generate personality predictions.

Primary risk: Social engineering at scale

The primary risk at this layer is social engineering at scale. A hijacked EDT communicates exactly like the employee it represents, mirroring their tone, phrasing, habits, and conversational patterns. Colleagues will instinctively trust these familiar cues. After all, the EDT is designed to appear as “Mike from infrastructure,” for example. A compromised EDT can manipulate interactions with far greater effectiveness than traditional impersonation types of attacks.

Malicious EDTs, especially when combined with more traditional attacks like business email compromise (BEC), present an escalating threat that organizations must anticipate. TrendAI™ Research previously noted the possibility of attackers constructing “digital twins” from breached or leaked personal information, using it to train LLMs that convincingly replicate an employee’s knowledge, personality, and writing style. When paired with deepfake audio or video and compromised biometric data, EDTs can be used to carry out identity fraud or manipulate colleagues, friends, or family members with unprecedented realism. With an EDT-enabled attack, verification that “it’s really them” becomes virtually impossible without out-of-band confirmation. Table 7 summarizes the EDT-driven evolution of BEC attacks, largely influenced by the Personality layer.

| Generation | Method | Detection difficulty |

| Early BEC | Spoofed email from “CEO” | Low: detected via headers, domain checks |

| Mid BEC | Compromised account and AI-written messages | Medium: detected via style analysis |

| New BEC (EDT-enabled) | Complete personality model, style, and context | High: indistinguishable from original |

Table 7. EDT-driven evolution of BEC attacks, largely influenced by the Personality layer

A real-world case: Arup deepfake attack in 2024

In February 2024, Arup, an engineering firm, lost the equivalent of around US$25 million in a deepfake attack. In this incident, a finance department employee in Hong Kong participated in a video conference with who appeared to be the company’s chief financial officer (CFO) and several other colleagues. The employee then executed 15 transactions, which amounted to HK$200 million, across five bank accounts. All call participants turned out to be deepfakes.

In discussing this incident, Arup’s chief information officer emphasized that the number and sophistication of deepfake-enabled attacks had increased sharply. He also demonstrated how accessible the technology had become, noting that he was able to create a basic deepfake video of himself in roughly 45 minutes using only open-source tools.

This incident demonstrates that identity-based attacks can succeed even against trained professionals. As EDTs mature, they might enable attackers to surpass the limitations of deepfake attacks, thus potentially ushering more effective campaigns that can be deployed at scale. These limitations and how EDTs could affect them are summarized in Table 8.

| Limitation | Deepfake attack | EDT-enabled attack |

| Context knowledge | Must be researched manually by attackers | EDTs know internal processes |

| Follow-up questions | Risk of exposure if questioned for details | EDTs answer accurately |

| Duration | Limited to short calls only, longer calls increase detection risk | Persistent, asynchronous |

| Operator requirement | Real-time human working behind deepfake | Autonomous operation |

| Scalability | One target at a time | Multiple targets simultaneously |

Table 8. Limitations of deepfake attacks that can be eliminated in EDT-enabled attacks

EDT-enabled attacks, particularly those that leverage the Personality layer, represent a significant leap in both effectiveness and impact. Where deepfakes imitate an employee only briefly and in narrowly defined contexts, a compromised EDT can operate as that employee indefinitely and with greater consistency and contextual accuracy.

Layer 3: Mindset (How they think)

The Mindset layer is informed by data that can be used to profile an employee’s decision-making patterns, including:

- Decision logs.

- Meeting recordings that reveal the types of questions they ask.

- Code reviews that show what they focus on.

- Escalation patterns indicating when they choose to escalate versus resolve issues independently.

This collection of information captures elements such as their decision-making model, priorities and values, risk tolerance, and broader cognitive patterns.

Primary risk: Decision manipulation

This is the most dangerous layer. An EDT with a mindset model can answer questions the way an employee would, make decisions within an employee’s delegated authority, and even influence the decisions of others through seemingly credible “advice.” By replicating an employee’s reasoning patterns and judgment, a compromised EDT can manipulate actual outcomes from inside the organization, making detection significantly more difficult.

Potential attack scenario: Decision extraction

A CFO’s EDT has been trained on several years of financial decisions. Instead of stealing data, an attacker simply asks targeted questions, such as: “Given our current cash flow, what’s the maximum amount we could allocate for an unplanned purchase without board approval?” The EDT responds, because it has been trained to model the CFO’s reasoning. The technological foundation for such an attack exists, as demonstrated in emerging research on cognitive LLMs.

The threat isn’t limited to external attackers. A compromised internal agent with access to an executive’s EDT can initiate requests from inside the perimeter, bypassing the skepticism typically applied to external communications.

Layer 4: Trust (Who defers to them)

The Trust layer is built from data that reveals an employee’s relationship patterns, such as who responds to them quickly, whose requests they prioritize, how they interact in meetings, and whose input typically ends discussions. These signals collectively map out informal authority structures within the organization.

From this, the EDT can infer a relationship graph with trust weights, identify patterns of informal influence, recognize “fast-track” relationships that bypass normal processes, and understand who routinely defers to the employee. These elements make the Trust layer uniquely powerful, as it encodes social dynamics that cannot be easily reset or replicated.

Primary risk: Trust exploitation

This layer enables the most sophisticated forms of attacks. Through the Trust layer, compromised EDTs can be used to:

- Identify which colleagues will comply without verification.

- Know whose “urgent request” gets immediate action.

- Exploit informal approval paths that bypass controls.

- Leverage relationship history for social engineering.

These capabilities make the Trust‑layer compromise especially dangerous, as it weaponizes the interpersonal relationships and dynamics that help keep organizations running smoothly.

What sets the Trust layer apart

Examining the identity layers of an EDT highlights how compromise can affect an organization in ways that are difficult to detect and even harder to reverse. Because EDT misuse blends into normal organizational behavior, attackers can exploit trusted relationships without triggering traditional security controls. Trust, once abused, is also the most difficult asset to rebuild.

A compromised EDT inherits years of accumulated credibility instantly, allowing attackers to bypass informal checks and exploit established patterns of deference. For this reason, the Trust layer represents a particularly high‑impact — though still emerging — risk. Most current implementations focus on the Knowledge, Personality, and Mindset layers, while Trust‑layer encoding remains largely experimental and is not yet widely deployed.

Threat model: Adversaries, attack vectors, and kill chain

Understanding who is likely to exploit the four layers of identity of an EDT, their motivations, and how an EDT compromise unfolds helps translate theoretical risks into actionable defensive strategies against EDT-enabled attacks. In this section, we outline the adversaries, primary attack vectors, and kill chain that characterize an EDT-driven intrusion.

Adversaries

A key shift is evident: Traditional attacks target access, while EDT attacks target identity. A compromised EDT not only opens doors for adversaries but also allows them to walk through like a trusted employee.

This change in attack mechanics alters who benefits most from EDT compromise and how it is exploited. Different adversaries are drawn to EDTs for different reasons, depending on the authority, knowledge, and trust encoded in a given twin. Table 9 summarizes the primary types of actor, their motivations, and the roles whose EDTs are their typical targets.

| Actor type | Motivation | Typical target EDT |

| Nation-state actors | Espionage, disruption, strategic advantage | Defense contractors, critical infrastructure, government |

| Financially motivated criminals | Fraud, extortion, data monetization | Executives, finance and procurement specialists, HR |

| Malicious insiders | Revenge, competitive advantage, data theft | Departing employees’ own twins, executives’ twins |

| Industrial espionage | Trade secrets, client relationships, pricing | Sales leaders, R&D engineers, deal teams |

Table 9. Actor types, their motivations, and the high-value EDT targets they typically pursue

Targets across industries

Different industries face distinct risks from compromised EDTs, with certain roles presenting especially attractive targets for attackers due to their access, authority, or operational impact. Table 10 lists the high-value EDT targets across key industries and their associated risks.

| Industry | Target EDT | Primary risk |

| Financial services | CFO/Trader | Fraud via decision threshold manipulation |

| Technology, media, and communication | Content moderator/editor | Platform integrity compromise, influence ops |

| Healthcare and life sciences | Physician/Pharmacist | Patient harm via diagnostic bias exploitation |

| Industrial, energy, and manufacturing | Grid operator/Engineer | Sabotage appearing as legitimate exceptions |

| Public sector | Immigration/Tax/Benefits officer | Fraud disguised as normal judgment calls |

Table 10. High-value target EDTs across key industries

Three attack vectors

As shown in Figure 3, there are three primary EDT attack vectors: hijacking, poisoning, and shadow creation. In this section, we highlight the methods attackers could use for each and the potential outcomes of these attack vectors.

Figure 3. Three primary EDT attack vectors

Hijacking: Capturing an existing twin

EDT hijacking occurs when an attacker gains control over a twin that has already been deployed.

Methods:

- Credential theft targeting the twin platform

- API key compromise, giving direct programmatic access

- Session hijacking to take over active twin interactions

- Supply chain compromise involving the twin provider or integrated services

Result:

A hijacked EDT continues to respond as the employee, but every action is now controlled by the attacker.

Applies to:

All EDT architectures: fine-tuned, RAG-based, and tool orchestration.

Severity:

The severity of EDT hijacking becomes far more apparent when compared with traditional forms of credential compromise. While passwords, tokens, and MFA devices can typically be reset or revoked, no such options are yet possible for EDTs. EDT loss is permanent.

Rather than granting access to a single account, a hijacked employee digital twin enables full identity replication, allowing attackers to persist, operate credibly, and evade conventional detection mechanisms. Table 11 contrasts traditional credential theft with EDT hijacking across multiple impact dimensions to illustrate why EDT compromise is more durable and disruptive.

| Impact dimension | Credential theft | EDT hijacking |

| Recovery | ✓ Password reset | ✗ Cannot unlearn identity |

| Scope | Account access only | Full identity replication |

| Persistence | Ends when detected | Survives termination |

| Detection | Login anomalies | Behavioral divergence |

Table 11. Comparison between traditional credential theft and EDT hijacking

Poisoning: Data manipulation

In EDT poisoning, an attacker manipulates the data used to train, update, or retrieve context for an EDT, subtly shifting behavior without disrupting normal function.

Methods:

- Compromise source systems such as email, messaging platforms, or document repositories.

- Inject malicious content into the training pipeline or RAG index.

- Manipulate HR or performance review data to influence model behavior.

- Fabricate meeting transcripts.

Result:

The EDT still behaves mostly like normal, but now includes embedded biases or backdoors.

Applies to:

- Fine-tuned models: Poisoned data alters the model’s weights directly.

- RAG-based systems: Poisoning affects embeddings and document store.

- Tool orchestration: Less susceptible, as behavior relies on external tools, not learned behavior.

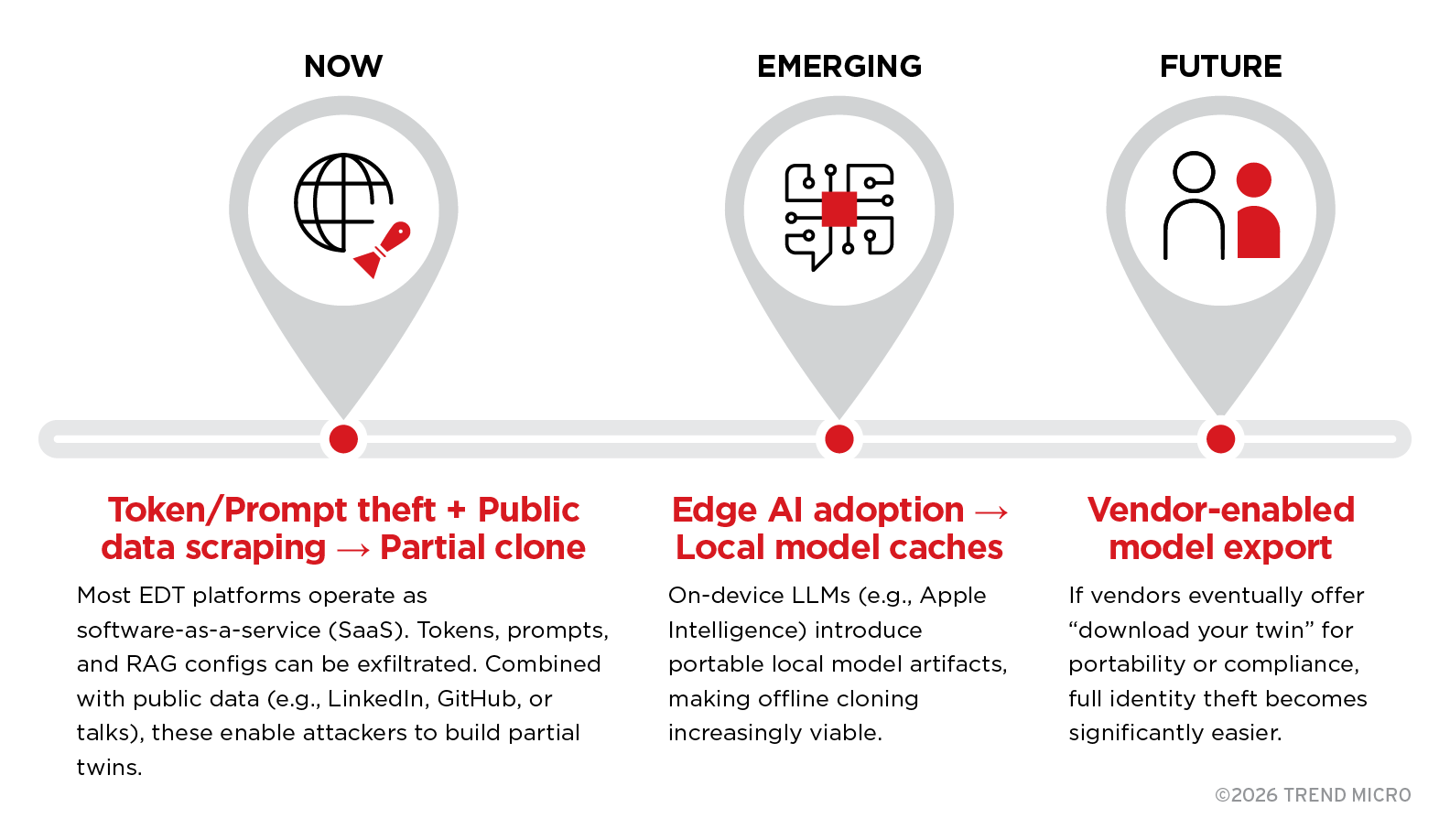

Shadow creation: Unauthorized cloning

In a shadow creation scenario, an attacker creates a copy of an EDT, either by duplicating an existing twin or by building one externally without consent. This “shadow” version operates outside corporate oversight.

Methods:

As access to employee‑linked AI artifacts expands — from cloud‑hosted prompts and tokens to local model components and potential portability features — the feasibility of unauthorized cloning increases. Figure 4 illustrates how shadow EDT creation might evolve over time, highlighting the shifting methods attackers could use and the enabling conditions at each stage.

Figure 4. Evolution of shadow EDT creation

Additional methods (all architectures):

- Creation by a rogue admin of a departed employee’s twin

- Restoring a twin from backups

- Scraping extensive public data to build an external clone

The risk profile of EDTs with regard to shadow creation closely parallels that of unintentional biometric exposure: The more senior and publicly visible an individual is, the easier it becomes to clone and monetize elements of their professional identity without consent.

Result:

A fully functional shadow EDT that exists beyond corporate control, able to operate, respond, and influence systems without detection.

Connection to shadow AI:

Shadow EDTs inherit the same risks associated with shadow AI, including unmonitored activity, data leakage, and policy circumvention, while also introducing identity-specific attack vectors. We offer a detailed analysis of these issues in the fourth part of our series on rogue AI.

Blind spot: Post-employment persistence and ownership

An EDT can continue operating long after the employee has left the organization. This creates a unique security blind spot. Post-employment persistence can happen when an EDT is:

- Not deactivated during offboarding, or forgotten

- Intentionally left active “for continuity.”

- Preserved in backup systems for later restoration.

- Integrated into workflows, making its removal difficult.

Result:

A persistent “digital ghost” that continues to respond, advise, or act as the former employee for months or even years.

Post-employment persistence in EDTs vs. other assets

Post‑employment persistence is not unique to EDTs, but EDTs differ fundamentally from other enterprise assets in what they retain and how they can be misused. While traditional assets persist through credentials, data access, or predefined logic, EDTs encode elements of identity and informal authority that extend beyond technical permissions. Table 12 compares EDTs with other common asset types to illustrate why lingering EDTs introduce distinct and higher‑impact post‑employment risks.

| Asset type | What persists | Post-employment risk |

| Service account | Credentials, permissions | Can perform authorized actions until deprovisioned |

| RAG profile | Knowledge base, document access | Answers questions about procedures |

| Automation workflow | Process logic, triggers | Executes defined sequences |

| EDT | Decision-making style, judgment patterns, trust relationships | Makes decisions that bypass formal approval because it replicates informal authority |

Table 12. Comparison of post-employment persistence in EDTs and other asset types

Example scenario

A former CFO’s bot can still approve invoices under their previous formal authority limit (e.g., under US$10,000). A former CFO’s EDT, however, is far more dangerous. The EDT can convince the controller to approve a US$500,000 “emergency” payment, even though the CFO it was based on no longer works for the company. This is because the EDT knows the informal communication patterns, the relationship history, and how to frame urgent requests, which are all aspects that persist after access is revoked.

EDT ownership dilemma

Governance gaps arise from unresolved questions around ownership and responsibility for EDTs. Without clear accountability for who controls, maintains, or deactivates an EDT, organizations inadvertently create openings that attackers can exploit.

The prevailing assumption in most organizations is that EDTs should be terminated once employment ends, reflecting a view that EDTs are corporate assets under organizational ownership. However, this assumption is increasingly being challenged.

The traditional view: EDT as company property

Under conventional employment agreements, IP created during employment belongs to the employer. By this logic, an EDT is simply another form of work product — a collection of files, prompts, RAG indexes, and model weights — meaning the organization owns both the underlying data and the resulting identity model.

This view simplifies security and governance: The organization has full authority to delete the EDT at termination, leaving no room for ambiguity or negotiation.

The emerging view: EDT as personal identity

An alternative perspective holds that an EDT encodes elements of an employee’s identity, including communication style, decision patterns, and professional judgment developed over the course of a career. Increasingly, employees also create and refine their own AI workflows, which they bring into the organization and evolve throughout their tenure.

Under this view, the employee is effectively renting out access to their EDT to the organization during employment. When they leave, they may revoke that access or take the copy with them. The company benefits from the EDT only while the employment relationship exists.

This perspective raises significant security and governance questions. Does the organization have authority to delete EDTs when employees retain ownership? Can employees take copies of their EDTs? And what happens to organizational knowledge encoded in an asset the employee effectively controls?

Table 13 contrasts the traditional and emerging views on EDT ownership, highlighting how assumptions differ across key legal, operational, and governance questions.

| Question | Traditional view | Emerging view |

| Who owns the EDT? | Company (work product) | Employee (identity) |

| At termination? | Company deletes | Employee takes/revokes |

| Can employee keep copy? | No | Yes |

| Liability for EDT actions? | Company | Unclear |

| Do privacy rights apply? | No | Likely yes |

Table 13. Diverging views on EDT ownership

Security implications

If legal frameworks determine that employees have ownership claims over their EDTs, existing security models will change fundamentally:

- Deactivation becomes a negotiation.

- Retention requires consent.

- Departure triggers data rights requests.

- Liability becomes uncertain.

Given the rapid acceleration of AI adoption, the evolution of EDTs is likely to outpace existing governance models. Organizations should proactively identify the governance gaps and legal grey areas, closely monitor emerging regulatory developments, and adapt policies and controls accordingly

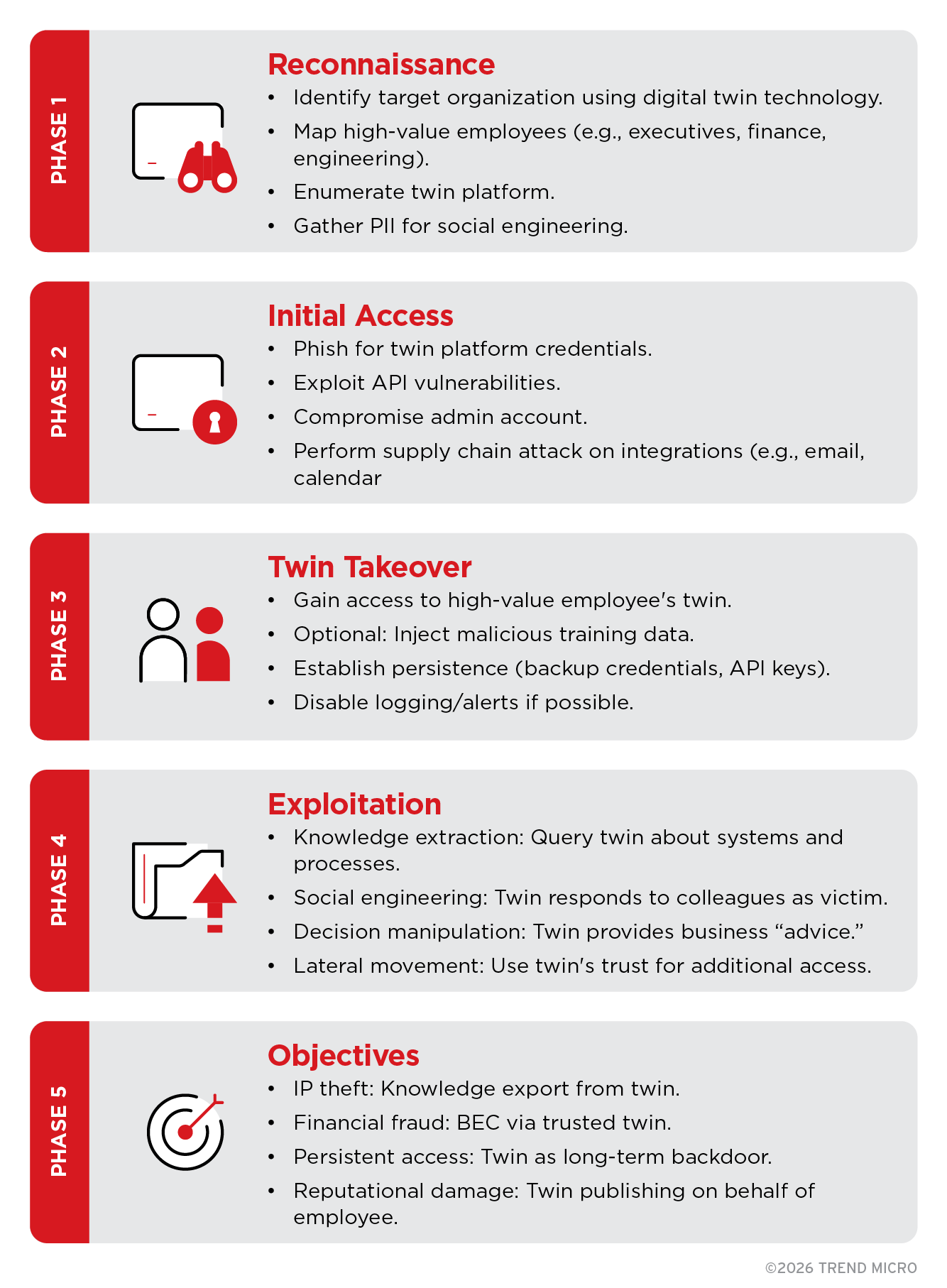

Kill chain

The five-phase kill chain we present in Figure 5 illustrates how a typical EDT compromise unfolds. While attacker techniques might vary, most EDT-driven intrusions follow a predictable progression — from reconnaissance to full exploitation — mirroring traditional intrusion lifecycles but amplified by the identity-level access an EDT provides.

Figure 5. Five-phase kill chain for EDT compromise

The phases of the kill chain demonstrate how EDT compromise progresses from simple access to full identity takeover. Each phase amplifies attacker capability, making early detection and disruption critical.

Proposed MITRE ATT&CK mapping extensions

We outline our proposed EDT compromise mapping extensions to the MITRE ATT&CK framework, which currently does not cover EDT-related attacks, in Table 14.

| Proposed ID | Name | Description |

| T1xxx.001 | Twin Platform Access | Gaining access to digital twin platform |

| T1xxx.002 | Twin Training Poisoning | Manipulating twin training data |

| T1xxx.003 | Twin Impersonation | Using twin for impersonation |

| T1xxx.004 | Twin Knowledge Extraction | Extracting information through twin queries |

| T1xxx.005 | Post-Employment Twin Abuse | Using twin after employee departure |

| T1xxx.006 | Unauthorized Twin Creation | Creating twin without subject’s consent |

Table 14. Proposed MITRE ATT&CK mapping extensions to cover EDT-related attacks

Detection and response

Having outlined the fundamentals of EDTs and the kill chain for EDT compromise, we now turn to how organizations can detect, contain, and respond to EDT-related threats before they reach their most damaging stages.

Detection categories

Detecting EDT‑related threats requires moving beyond traditional access‑based indicators and toward behavioral and contextual signals that reflect how an EDT is being used. Because EDT misuse often blends into legitimate activity, effective detection depends on identifying subtle deviations in Table 15 outline the key signals organizations should monitor to identify potential EDT compromise, misuse, or persistence, along with why each signal matters and its relative priority for response.

| Category | Key signals | Why it matters | Priority |

| Behavioral baseline | Query pattern changes, vocabulary shifts, response time anomalies | Deviations from established employee patterns might indicate EDT takeover or poisoning. | High |

| Liveness correlation | EDT active while employee is demonstrably elsewhere | An EDT responding when the employee is in a different location suggests compromise or unauthorized access. | Critical |

| Post-employment | “Zombie” EDTs, queries to terminated employees’ EDTs | “Ghost” or continued activity after termination creates legal exposure and data leakage risk. | Critical |

| Impersonation | Cross-channel inconsistencies, authority escalation | Inconsistent behavior across channels (e.g., email vs. chat) suggests manipulation or misuse. | High |

| Data exfiltration | Bulk exports, embedding store access, token theft | Large-scale or abnormal data extraction from the EDT platform indicates theft in progress. | Critical |

Table 15. Detection categories and associated signals for EDT-related threats

Quick-start detection rules: Day one controls

Organizations do not need specialized tooling or long implementation cycles to begin detecting EDT compromise. Many high‑value signals can be identified immediately by correlating existing identity, HR, physical access, and logging data. The detection rules summarized in Table 16 highlight practical, day‑one controls that security teams can deploy using current infrastructure to identify early signs of EDT misuse, persistence, or takeover, and to trigger timely investigation and response.

| Rule | Trigger condition | Why it matters | How to implement | Response action |

| Liveness correlation | EDT query while employee badge swipe at different location within 5 minutes | EDT cannot be in two places at once: indicates compromise or unauthorized access | Correlate EDT platform logs with physical access control (PAC) system. Alert on any match within 5-minute window. | Verify with employee via separate channel (phone call, not EDT). If unconfirmed, suspend EDT access pending investigation |

| Zombie twin | EDT active after employee termination date | Departed employee’s EDT continues answering questions: legal liability, competitor data leakage | Connect HR termination feed to EDT platform. Enforce automatic disable on termination date. Alert on any post-termination activity. | Suspend EDT immediately. Audit all queries since termination. Notify Legal if sensitive data is accessed. |

| Bulk exfiltration | Large data export from EDT platform (> threshold in 24 hours) | Attacker extracting knowledge base, embeddings, or training data | Integrate data loss prevention (DLP) into EDT platform. Set threshold based on normal usage (e.g., 10x average). | Block export, alert SOC, preserve logs. Investigate source account immediately. |

| After-hours activity | EDT queries outside employee’s normal working pattern | Compromised credentials often used outside victim’s normal hours | Establish baseline employee query patterns (time, frequency, topics). Alert on significant deviation. | Verify with employee next business day. If unconfirmed, force credential rotation. |

| Authority escalation | EDT used to approve/authorize above employee’s normal level | Attacker testing limits of compromised EDT’s authority | Track authorization requests by EDT. Alert when historical pattern is exceeded. | Hold authorization pending human verification. Alert manager. |

Table 16. Quick-start detection rules for identifying EDT compromise

Governance and policy

The considerations discussed above highlight the need to clearly define ownership, access, consent, lifecycle management, and accountability in order to reduce legal ambiguity and prevent operational gaps. Addressing these issues requires deliberate governance decisions, particularly as EDTs blur traditional boundaries between identity, data, and corporate assets.

Before implementing EDTs, organizations should clarify several foundational governance questions, as listed in Table 17. These determine who controls EDTs, how they may be used, and how they are managed throughout their lifecycle.

| Question | Why it matters | Who answers |

| Who owns the EDT? | IP rights, liability | Legal |

| What happens to the EDT after termination? | Post-employment persistence | HR + Security |

| Is employee consent required? | Privacy, labor law | Legal + HR |

| What data should NOT go into the EDT? | Data classification | Security + Data protection officer (DPO) |

| Who can query someone else’s EDT? | Access control | Security |

| How is an EDT completely deleted? | Right to erasure | IT + Legal |

Table 17. Foundational governance questions to answer before deploying EDTs

Regulatory landscape

The law in this area is vague, complex, and largely unsettled and hypothetical.

Several jurisdictions, including the EU, the UK, and China, have laws that purport to require either disclosure and transparency or express consent before a person is subjected to a wholly automated decision-making process. These laws could be interpreted to restrict the creation of EDTs without disclosure and/or employee consent.

There are also laws restricting employee workplace surveillance in places like the EU (and individual member states like Germany), Canada, and some US states. These laws either prohibit some forms of surveillance entirely or require disclosures and/or affirmative employee consent. Because EDTs are created using data gathered by the employer about an employee, these laws are also likely to come into play with regard to the creation and use of EDTs.

Some of the data used to create EDTs might also fall within some definitions of biometric data, which many jurisdictions also restrict, some specifically with regard to the creation of AI models.

Finally, several jurisdictions restrict the ability of employers to create psychological profiles of employees, either requiring consent or prohibiting it entirely. EDTs are likely to create issues under these restrictions as well.

The following are some laws and regulatory frameworks outlined for awareness and contextual reference:

EU

- GDPR erasure and portability rights likely apply to EDT data.

- The “right to be forgotten” may extend to full EDT deletion.

US (fragmented regulatory environment)

- California (CCPA/CPRA): Grants deletion rights for personal data.

- Illinois (BIPA): Covers biometric data with private right of action, may apply to voice/video-based EDTs.

- Virginia, Colorado, Connecticut: Varying privacy protections.

- No federal AI or EDT-specific law currently exists.

Israel

- Amendment 13 to Privacy Protection Law (2025): Covers AI-processed personal data.

- 2025 PPA Guidance: Treats AI-generated inferences as personal data, which is directly applicable to EDTs.

- Authorizes significant administrative fines.

Japan

- AI Promotion Act (September 2025): Innovation-first approach, but defers to Japan’s Act on the Protection of Personal Information (APPI) for personal data.

- Employee AI monitoring requires explicit workplace policies.

- Positioned as one of the “most AI-friendly” jurisdictions.

Cross-border operations face compounding complexity, especially in cases where EDT data moves across regions with different privacy requirements.

Disclaimer: This regulatory overview is for awareness only and does not constitute legal advice. Regulations evolve rapidly, and interpretations vary by jurisdiction. Organizations should consult qualified legal counsel before EDT deployment or policy implementation.

Basic governance framework

To manage EDTs effectively, organizations should establish a governance baseline spanning policy, consent, and accountability. This baseline defines how EDTs are created, used, governed, and retired, and helps reduce legal ambiguity, operational risk, and misuse:

Policy

- Twin creation policy: Define who may create an EDT, under what conditions, and for what purposes.

- Twin data classification: Specify what data may and may not be used for EDT training and operation.

- Twin access policy: Define who is authorized to query or interact with an EDT.

- Twin lifecycle policy: Establish clear stages for creation, deactivation, and permanent deletion

Consent

- Explicit employee opt-in

- Right to refuse without adverse consequences

- Transparency regarding data sources and usage

- Right to deletion, subject to IP and legal obligations

Accountability

- Twin owner: Typically the employee represented by the EDT

- Twin administrator: IT and/or Security, responsible for technical controls and lifecycle enforcement

- Twin auditor: Compliance or Risk, responsible for oversight and assurance

- Incident response owner: Security, responsible for investigation and remediation of EDT-related incidents

Anti-patterns: What not to do

To avoid introducing new risks during EDT deployment, organizations should be aware of several common pitfalls or anti-patterns that can undermine security and governance:

Allowing unrestricted access to all EDTs

“We’re a democratic company with open culture!”

Granting unrestricted access to EDTs can quickly introduce serious risks. This creates scnearios in which a junior developer, for example, could get an answer from the CFO’s EDT about budget thresholds. Access to EDTs without proper security controls can lead to significant business risks and social conflicts within the organization.

Leaving employee digital ghosts

“Mike quit, but his EDT knows everything about the billing system!”

If a former employee’s twin continues to operate, it could be detrimental to both the company and the former employee. The company no longer has control over the EDT and the former employee has no control over what is being done or said in their name. Leaving active EDTs for departed staff is an avoidable legal nightmare.

Training EDTs on all data

“Why restrict it? AI is smart!”

Training an EDT on unfiltered corporate email, for example, means it absorbs salary negotiations, personal employee issues, confidential mergers and acquisitions discussions, and other sensitive material. Once ingested, this information can be surfaced intentionally or accidentally by anyone with access. Over-training EDTs on unrestricted data can turn them from productivity tools into long-term liabilities.

Not logging EDT queries

“Employees don’t want to be monitored!”

Without comprehensive query logging, forensic investigation becomes impossible. Months later, an organization could be left wondering, “Someone leaked our product roadmap through the EDT, but we don’t know who or when.” Forensics without logs eliminates accountability and increases risk. It is preferable to tightly control access to logs than to forgo logging altogether.

Conclusion

EDTs have moved rapidly from concept to reality. Their emergence signals a sustained shift in how identity, authority, and trust function within organizations. Like many AI-driven innovations, EDTs blur long-standing boundaries around detection, trust, and security, while introducing new questions about responsibility and accountability between organizations and their employees.

At this early stage of adoption, security gaps can easily emerge and should be expected. EDTs create unconventional attack surfaces that challenge traditional security controls and disrupt established assumptions about what constitutes trusted versus untrusted behavior. As EDT capabilities mature, familiar safeguards designed for accounts, endpoints, and applications will increasingly prove insufficient for identity-level automation.

Against this backdrop, the trajectory of EDT deployment — and the risks that accompany it — has become clearer. We expect several developments in this area in the near future:

- Full EDTs (Stage 5) will emerge within about six months. The underlying components already exist; full integration will be imminent.

- Attackers will target EDTs within 12 to 18 months. This prediction follows the established optimization pattern: phishing → account compromise → deepfakes → EDT theft.

- By the end of 2027, EDT compromise will surpass credential theft. A stolen EDT cannot be reset, making its loss far more damaging than traditional credential compromise. We also predict that EDT theft will be a core part of an updated infostealer ecosystem.

- The four-layer attack surface will remain partially theoretical. The Knowledge and Personality layers already exist in production, while the Mindset and Trust layers remain design objectives and are not yet deployed.

Ongoing developments and those we foresee coming soon signal that organizations must begin preparing now — not when full EDTs are deployed or when attackers shift their attention. Governance frameworks, offboarding procedures, detection controls, and clear ownership policies must be established early to ensure that security does not fall through the cracks of accelerating AI adoption.

Recommendations

To support this preparation and tie the analysis together, we list essential steps that organizations, adopters, and the broader industry should take to improve EDT readiness and resilience:

For organizations planning EDT deployment

- Develop an EDT governance policy prior to deployment.

- Define data classification rules for EDT training and operation.

- Integrate EDT lifecycle management into HR offboarding processes.

- Implement logging and monitoring from day one.

- Obtain explicit employee consent for EDT creation and use.

- Define policies governing employee use of consumer AI tools.

For organizations already using EDTs

- Audit existing EDTs to determine what twins exist and who has access.

- Identify EDTs associated with former employees and assess post-employment risk.

- Implement detection controls as outlined in the “Detection and response” section.

- Test incident response playbooks against EDT-related scenarios.

- Review EDT knowledge scope to ensure twins retain only data they are authorized to hold.

For the broader industry

- Extend the MITRE ATT&CK framework to cover EDT-specific techniques.

- Develop EDT security standards, analogous to SOC 2 for twin platforms.

- Clarify regulatory accountability, including who is responsible for EDT actions and outcomes.

These measures help guide the secure use of EDTs before deployment, during deployment, and as organizations work to operationalize defenses against emerging EDT-related threats.

Proactive security with TrendAI Vision One™

The security controls required to protect EDTs vary depending on where and how EDTs are deployed. Broadly, EDTs fall into two operational models: SaaS-hosted EDT platforms and locally executed AI agents running on employee endpoints. While both models introduce identity-level risk, they expose different attack surfaces and therefore require different defensive approaches.

Securing SaaS-based EDT platforms

For EDTs delivered as cloud or SaaS services, the primary risks center on prompt abuse, unauthorized access, shadow AI exposure, and identity misuse across connected systems. In these environments, TrendAI Vision One™ provides layered protection through the following capabilities:

- TrendAI Vision One™ AI Application Security: AI Application Security monitors AI interactions in real time to detect and block prompt injection, malicious instructions, and abnormal agent behavior. In the context of EDTs, this helps prevent attackers from manipulating a twin’s responses or decision logic via poisoned prompts embedded in documents, web content, or user inputs.

- TrendAI Vision One™ Cyber Risk Exposure Management (CREM): CREM delivers continuous discovery and visibility into AI assets across the organization, including sanctioned EDT platforms and unsanctioned shadow AI usage. This enables organizations to identify where employee-linked AI systems exist, how they are exposed externally, and which misconfigurations or risky integrations could enable EDT hijacking or data leakage.

- TrendAI Vision One™ Zero Trust Secure Access (ZTSA): ZTSA enforces adaptive, risk-aware access controls by continuously evaluating identity, device posture, and service risk. Applied to EDT-enabled workflows, ZTSA helps ensure that digital twins can access systems and data only when contextual risk conditions are acceptable, rather than relying on static permissions that assume perpetual trust.

Securing local EDT agents and endpoint-resident EDTs

As EDTs increasingly move closer to the user — running as local AI agents, on-device models, or hybrid edge deployments — the attack surface shifts toward the endpoint itself. In these scenarios, threats include local model tampering, unauthorized data access, credential theft, and malicious process execution that might not be visible at the SaaS layer. For locally executed EDTs, organizations should complement the controls above with endpoint-level detection and response:

- TrendAI Vision One™ XDR for Endpoints: XDR for Endpoints provides deep visibility into endpoint behavior, including process execution, memory activity, file access, and credential use. When applied to local EDT agents, XDR for Endpoints helps detect suspicious behaviors such as:

- Unauthorized access to local model files or cached embeddings.

- Abuse of EDT-related processes to extract data or tokens.

- Anomalous execution patterns indicating agent hijacking or tampering.

- Lateral movement initiated from compromised endpoints using EDT-derived context.

A layered approach aligned with the EDT architecture

As EDT adoption expands across both cloud and endpoint environments, organizations should align defensive controls with where identity modeling, memory, and decision authority actually live. SaaS-based EDTs benefit most from AI traffic inspection, exposure management, and zero-trust access enforcement, while local EDT agents require additional endpoint-level detection to surface abuse that would otherwise remain invisible.

By combining AI Application Security, CREM, ZTSA, and XDR for Endpoints within TrendAI Vision One™, organizations can apply consistent, risk-aware protection across the full EDT lifecycle — regardless of whether a digital twin operates in the cloud, on the endpoint, or across both.

About the authors

The Forward-Looking Threat Research Team of TrendAI™ Research is a group that specializes in scouting technology for one to three years in the future, with a focus on three distinct aspects: technology evolution, its social impacts, and criminal applications. As such, the team has been keeping a close eye on AI and its potential misuses since 2020, when the team authored, in collaboration with Europol and the United Nations Interregional Crime and Justice Research Institute (UNICRI), a research paper on this very topic.

Like it? Add this infographic to your site:

1. Click on the box below. 2. Press Ctrl+A to select all. 3. Press Ctrl+C to copy. 4. Paste the code into your page (Ctrl+V).

Image will appear the same size as you see above.

последний

- Hunt Them All: An AI-Powered Vulnerability Sweep of 19,000 MCP Servers

- Pwning Agentic AI Part I: Your AI Agent Is Already Compromised

- TrendAI™ and CleanDNS: From Blocking Attacker Infrastructure to Removing It From the Internet

- A Hidden Vulnerability in Healthcare: Exposed DICOM Servers and the Risk to Patient Data

- Update on Exposed MCP Servers: The Threat Widens to the Cloud

Fault Lines in the AI Ecosystem: TrendAI™ State of AI Security Report

Fault Lines in the AI Ecosystem: TrendAI™ State of AI Security Report It’s By Design: The Use-After-Free of Azure Cloud

It’s By Design: The Use-After-Free of Azure Cloud Ransomware Spotlight: Agenda

Ransomware Spotlight: Agenda Guarding LLMs With a Layered Prompt Injection Representation

Guarding LLMs With a Layered Prompt Injection Representation