By Rachel Jin, Russell Meyers, Alifiya Sadikali, and Casey Mondoux

Key takeaways

- Agentic AI systems autonomously plan, reason, and act across enterprise environments, introducing unprecedented capabilities and risks by breaking the traditional software paradigm of human-driven execution.

- The adoption of agentic AI shifts cybersecurity priorities, requiring organizations to move beyond traditional defenses and implement new governance strategies that address the autonomous and adaptive nature of these systems.

- TrendAI™ introduces Agentic Governance Gateway to empower organizations to discover, observe, understand, detect, and enforce governance over agentic AI behaviors, ensuring safe and reliable adoption of autonomous AI.

The existence of agentic AI means that for the first time, software can decide and act without being seen. Agentic AI systems can now plan, reason, decide, and ultimately carry out decisions across enterprise environments. They no longer just generate outputs, as software was previously limited to doing; they can now call APIs, access sensitive data, trigger workflows, and interact with other systems on their own, among other novel capabilities.

While this has incredible potential to simplify and automate workflows and processes, it also introduces a new class of risk. Cybersecurity has been built around a simple assumption: software executes what humans decide. With agentic AI, that assumption is no longer valid.

Agentic AI systems differ from traditional software in that they are not fully deterministic; they operate through iterative loops, adapting to new inputs, tool outputs, and changing context. Because they are designed to be autonomously adaptive, their actions can evolve beyond their original instructions without direct human oversight at every step. This can, in turn, create outcomes that are difficult to predict, trace, or control.

This shift in how systems operate creates unprecedented capability and potential, and the risk that comes with it needs to be addressed.

The Agentic AI Security Gap

Agentic systems collapse the traditional attack chain: a single manipulated instruction, delivered through prompt injection, tool misuse, or data poisoning, can trigger disproportionate impact. Agency enables malicious intent to cascade rapidly from initial access to data exfiltration and broader system compromise.

Software like OpenClaw accelerates this reality: it can be deployed within minutes and granted access to data, APIs, and enterprise workflows from day one. At NVIDIA GTC, a keynote demonstration showed how easily agents can be installed and how they can orchestrate complex, multistep workflows across systems in real time.

The combination of ease of deployment and broad access is what makes agentic systems so powerful and so risky at the same time. The accessibility and rapid adoption of agentic AI expose a critical gap: we are giving software the authority to act without giving security teams visibility into how those actions unfold.

Why Existing Security Models Break

In the agentic era, the control point has shifted. Most current approaches try to extend familiar controls:

- Secure the model

- Secure the application

- Secure the endpoint

But agentic systems don’t operate within a single boundary. Agentic AI operates across interactions; the risk now lives in:

- How agents communicate

- How decisions propagate

- How intent is translated into action

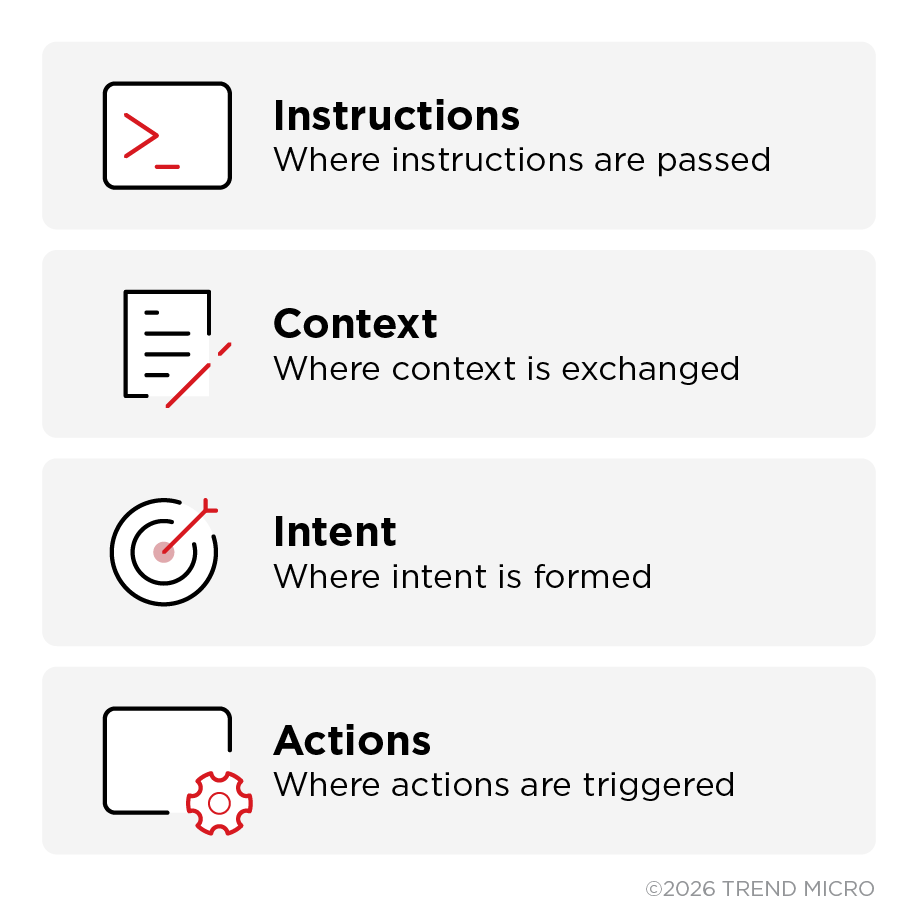

The primary security control point is no longer the endpoint or the application. TrendAI™ identifies this new kind of control point as a checkpoint for how agentic systems interact, exchange information, and take action. This interaction layer is the communication fabric between agents, tools, models, and data. The I/O checkpoint of autonomous systems includes where instructions are passed, where context is exchanged, where intent is formed, and where actions are triggered.

Today, that layer is largely ungoverned. If security cannot see and control this layer, it cannot effectively govern what agentic systems are doing.

Advances like confidential computing can now help organizations trust the environment where AI runs to ensure data remains protected, but a new version of the shared responsibility model is emerging. While infrastructure can secure the environment, organizations are now responsible for governing what autonomous systems do with that data.

This means that an organization must ask a few simple questions, the answers to which will determine how well it governs its autonomous systems:

- Does your organization have visibility into what its agentic systems are doing?

- Can those actions be trusted?

- Is intervention possible, and how early can it be implemented?

Agentic systems represent a step-change in productivity and capability, so decelerating adoption cannot be recommended. The only way forward is to recognize that taking on the responsibility of governing these systems is just as critical as making the decision to deploy them. This is the gap Agentic Governance Gateway is designed to address.

From Security to Agentic Governance Gateway

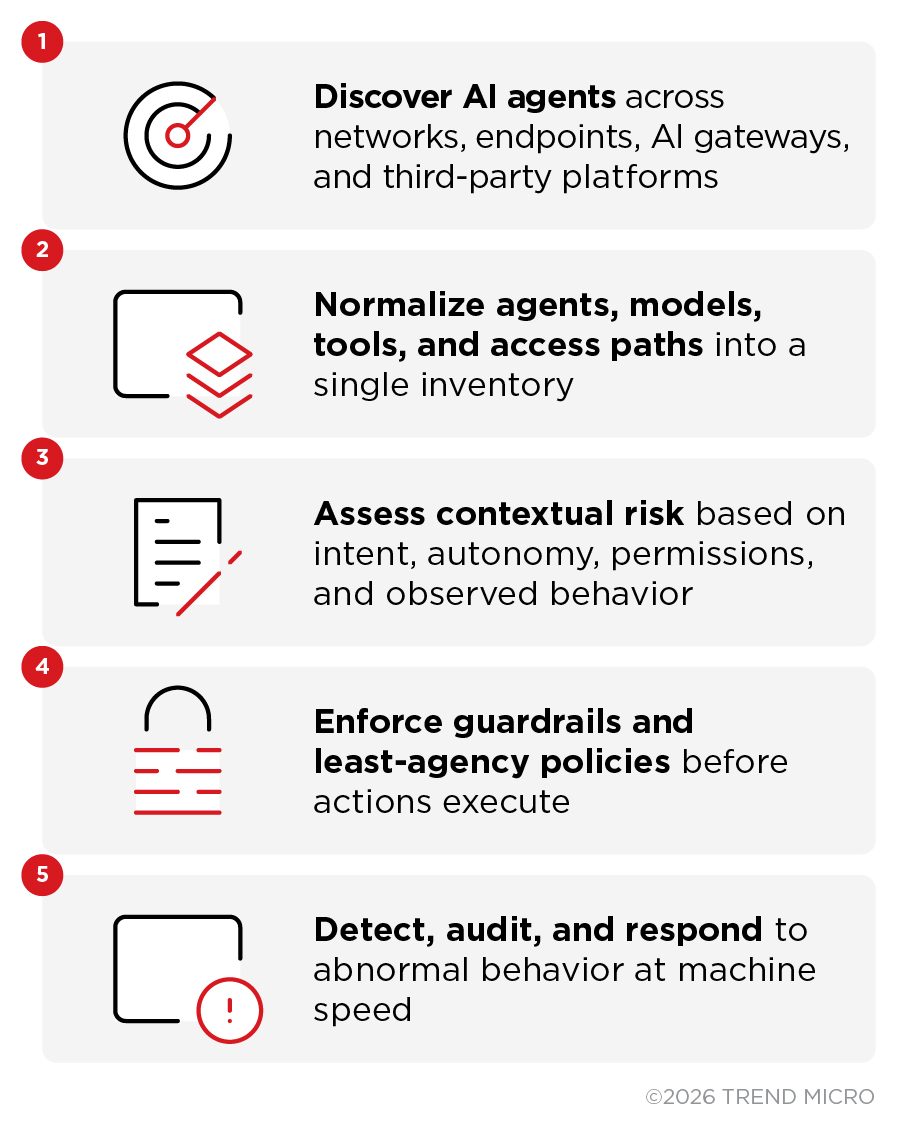

TrendAI™ introduces the Agentic Governance Gateway, where organizations can govern behavior at the point where actions are created. Agentic Governance Gateway can:

- Discover where agents exist and what they can access

- Observe how they interact across systems

- Understand the context and intent behind those interactions

- Detect when behavior deviates from intended outcomes

- Enforce policies and stop unsafe actions

- Introduce human approval at critical decision points
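As a rough illustration of what enforcement and human approval at the interaction layer could look like, the sketch below evaluates a proposed agent action before it runs and returns allow, deny, or require-approval. Every name in it (`ToolCall`, `Policy`, `evaluate`, the agent and tool names) is a hypothetical chosen for this example; it is not the Agentic Governance Gateway API, only a minimal sketch assuming a default-deny allowlist per agent.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    DENY = "deny"
    REQUIRE_APPROVAL = "require_approval"


@dataclass(frozen=True)
class ToolCall:
    """A proposed action, captured before it executes (hypothetical shape)."""
    agent_id: str
    tool: str
    target: str


@dataclass
class Policy:
    """Per-agent tool allowlist plus tools that always need a human (assumed model)."""
    allowed_tools: dict[str, set[str]] = field(default_factory=dict)
    high_impact_tools: set[str] = field(default_factory=set)


def evaluate(call: ToolCall, policy: Policy) -> Decision:
    # Default deny: unknown agents and unlisted tools are blocked.
    if call.tool not in policy.allowed_tools.get(call.agent_id, set()):
        return Decision.DENY
    # Permitted but high-impact actions pause for human approval.
    if call.tool in policy.high_impact_tools:
        return Decision.REQUIRE_APPROVAL
    return Decision.ALLOW


policy = Policy(
    allowed_tools={"billing-agent": {"read_invoice", "issue_refund"}},
    high_impact_tools={"issue_refund"},
)

for call in [
    ToolCall("billing-agent", "read_invoice", "invoice/1001"),
    ToolCall("billing-agent", "issue_refund", "invoice/1001"),
    ToolCall("billing-agent", "delete_records", "customers"),
    ToolCall("unknown-agent", "read_invoice", "invoice/1001"),
]:
    print(call.agent_id, call.tool, "->", evaluate(call, policy).value)
```

The check sits between the agent's decision and the tool's execution, which is the point the list above describes: the action is inspected when it is created, not after it has taken effect.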

Think of it like air traffic at scale, where each flight makes decisions, changes direction, and interacts with others in real time. The system works because everything is coordinated, not because each individual component is secure in isolation. Without a control layer, you may have powerful systems in motion, but no clear visibility into where they’re going, how they’re interacting, or what happens when paths collide.

Years of ransomware response and breach containment have built strong capabilities in visibility, detection, and response. Agentic systems change where those capabilities must apply: when AI agents can reason, invoke tools, access data, and execute actions without human approval, governance must operate at the interaction layer, where intent is implemented and actions are triggered. Agentic Governance Gateway builds on what already exists, serving as the control layer that provides visibility, coordination, and intervention at the point where decisions turn into actions.

Turning Governance Into Outcomes

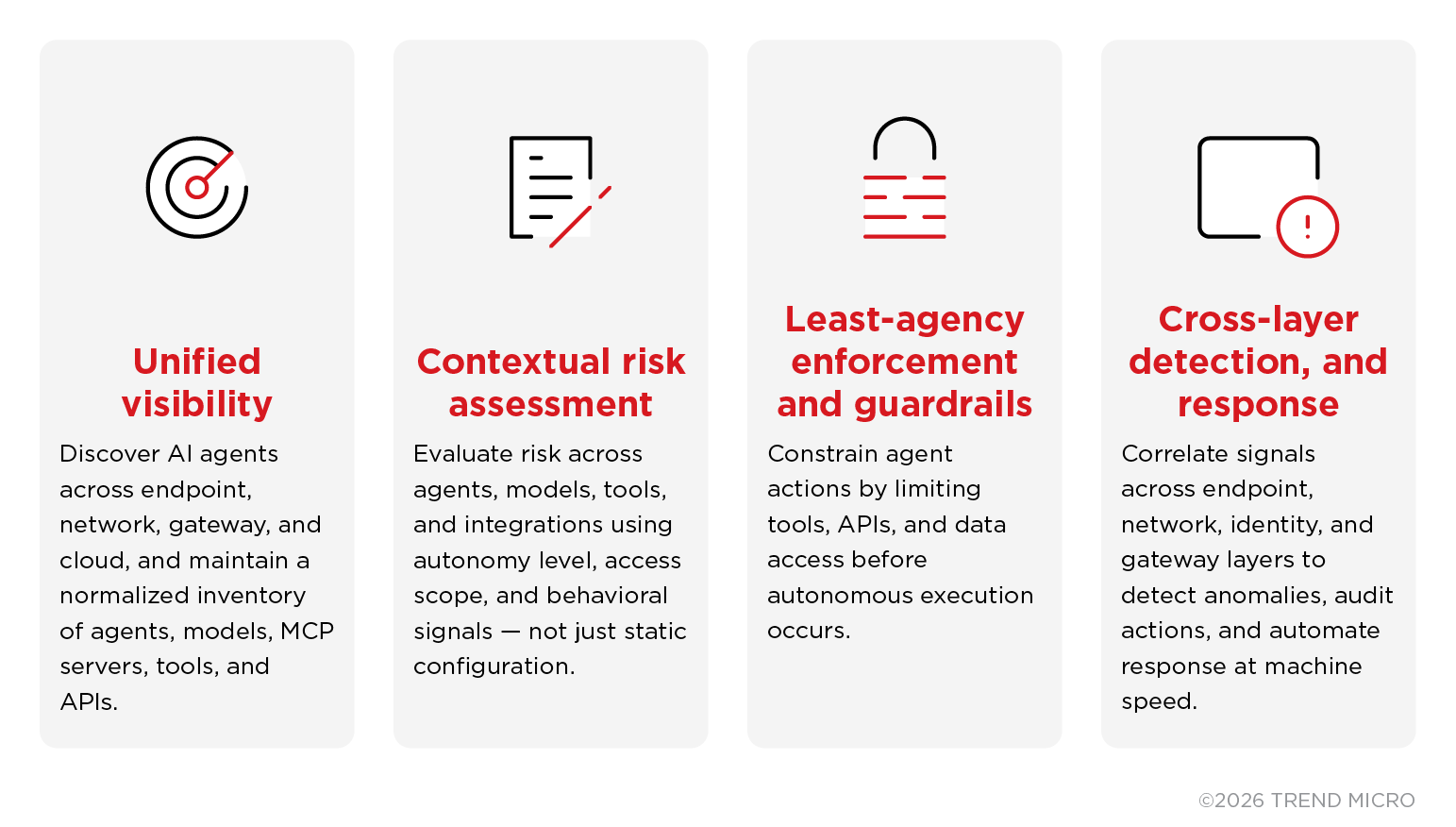

TrendAI Vision One™ extends its long-standing strengths in threat research, endpoint, network detection and response, and AI‑driven analytics to the interaction layer where agentic systems operate.

Built on decades of insight from the Zero Day Initiative (ZDI) and leadership in cross‑layer detection, the platform applies proven capabilities to autonomous AI.

Governance becomes operational by taking these familiar security capabilities and extending them to govern decision-making systems, not just human-driven activity.

With a unified platform approach like TrendAI Vision One™, organizations can begin operationalizing Agentic Governance Gateway by extending proven security controls to autonomous systems.

What CISOs Should Do Now

Agentic AI represents a fundamental change in how software behaves. We are moving from protecting systems that produce deterministic outcomes to governing systems that make decisions. Agentic systems are decision-making entities operating across your environment. Security must evolve accordingly.

The transition to agentic systems is already underway and accelerating. To begin governing autonomous AI at scale, CISOs should focus on:

- Building an inventory of AI agents and understanding what they can access and execute

- Applying least‑privilege and least‑agency policies by default

- Treating agent tools, skills, and extensions as supply‑chain risks

- Monitoring interaction and communication flows, not just endpoints

- Introducing guardrails or approval for high‑impact autonomous actions
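The first two steps above, inventory and least privilege, can be sketched as a simple audit that compares what each agent has been granted with what it has actually used. The record fields, permission strings, and agent names below are assumptions made for this example and do not come from any specific product; a real inventory would be populated from discovery tooling and activity logs.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AgentRecord:
    """One inventory entry (hypothetical fields)."""
    name: str
    owner: str
    granted: set[str] = field(default_factory=set)  # permissions the agent holds
    used: set[str] = field(default_factory=set)     # permissions seen in activity logs


def unused_grants(agent: AgentRecord) -> set[str]:
    """Permissions held but never exercised: candidates for removal."""
    return agent.granted - agent.used


def audit(inventory: list[AgentRecord]) -> dict[str, list[str]]:
    """Map each over-privileged agent to its sorted unused permissions."""
    return {
        agent.name: sorted(unused_grants(agent))
        for agent in inventory
        if unused_grants(agent)
    }


inventory = [
    AgentRecord(
        "ticket-triage",
        "it-ops",
        granted={"tickets:read", "tickets:write", "email:send"},
        used={"tickets:read", "tickets:write"},
    ),
    AgentRecord(
        "report-builder",
        "finance",
        granted={"ledger:read"},
        used={"ledger:read"},
    ),
]

print(audit(inventory))  # {'ticket-triage': ['email:send']}
```

Running an audit like this on a schedule gives a concrete starting point for trimming grants toward least privilege, though observed usage alone is only one signal and rarely used permissions may still be legitimate.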

These steps mark the shift from securing AI components to governing autonomous behavior, the foundation for adopting agentic AI with confidence and control.

AI Fearlessly

The future of AI will not be defined by how fast it moves alone, but by how safely and confidently organizations can use it. The new frontier opened by agentic AI can be entered with confidence. Agentic Governance Gateway enables organizations to understand what their systems are doing, trust how they operate, and control outcomes when it matters most.

Move forward. Operate with control. Adopt AI Fearlessly.

