Audio-only social media apps such as ClubHouse, Riffr, Listen, Audlist, and HearMeOut have steadily attracted more and more users in recent years. But like any other technology, these apps are not immune to security risks. Furthermore, most of the attacks that exploit these risks can be automated, which helps attackers spread them more easily and quickly. It should be noted that these apps are not inherently malicious; the threats come from cybercriminals looking for ways to exploit these platforms.

In this entry, we outline and demonstrate these risks by analyzing these apps (primarily ClubHouse, but also Riffr, Listen, Audlist, and HearMeOut), and we share some recommendations on how to avoid them. The Stanford Internet Observatory (SIO) has published related but independent research.

Our research was conducted on February 8–11 this year. By the time of publication, some of the issues described in this document may have been fixed or may be in the process of being fixed by the app vendors. The brands mentioned here have been informed about these findings. We acknowledge that ClubHouse responded rapidly to the concerns raised by the SIO and other researchers.

We have also independently obtained and analyzed the software tools allegedly used to "spill audio from ClubHouse," an episode that was highlighted in the press and promptly blocked by ClubHouse. We want to underline that this was not a security breach. What happened is that a developer created a mirror website that allowed others to listen in through the developer's own account rather than through their personal accounts. While this certainly breaks the terms of service, no specific security weakness was exploited and, most importantly, the mirror website did not record anything: the audio was still streamed from ClubHouse servers to the requesting client and never passed through the mirror website. In other words, that website was nothing more than a JavaScript-based client instead of an iOS one. Although this type of service abuse can be made more difficult, no web service or social network is immune to it, because there is no technical way to reliably block abuse without affecting availability for legitimate users.

Comparing risks between phone calls and audio-centric app calls

Security risks in the use of phones overlap with those of audio-centric apps for calls. Because of their similar nature, both channels can be wiretapped, intercepted, and illegally recorded. Attacks on both can also be automated, though perhaps to a greater extent on online platforms. Both can be abused for blackmail, and readily available deepfake tools can facilitate fraud on either. But there are some subtle differences in the risks for these channels.

The first difference is the number of people who can participate in a call, which determines how much data can be stolen or how many people can receive false information. While a phone call can accommodate at most a small group at a time, these apps can accommodate thousands. On ClubHouse alone, up to 5,000 people can join a room, a sizable number even when compared to online but non-voice-centric social networks such as Facebook. This means that, should an attacker decide to steal information from call participants or ruin a user's reputation, a crowd of thousands could become victims or an audience.

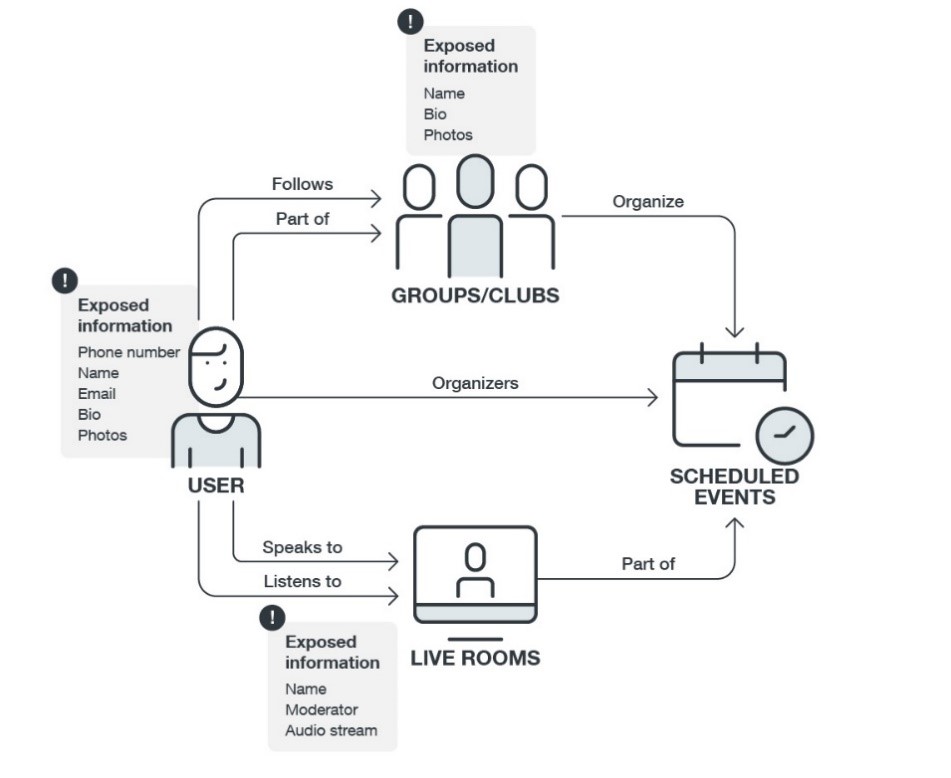

Relatedly, the types of data that can be stolen also differ. In phone calls, the data that can be stolen depends on what the receivers disclose. In most voice-centric apps, depending on how users configure their accounts, data such as photos, phone numbers, email addresses, and other personally identifiable information (PII) can be readily accessed by potential attackers who also have accounts in these apps.

Another difference is user impersonation. While callers can also assume another person's identity in phone calls, the deception is more believable in audio-only social media apps, since malicious actors can create fake profiles using the impersonated person's photo and information.

Also, voice-centric apps, like some online platforms, can be used to start covert channels for command & control (C&C). We expounded on this in our report.

Security risks for audio-only social networking platforms

Here are some sample attacks that can be launched against users of audio-centric social media apps (full details are in our technical brief):

1. Network Traffic Interception and Wiretapping

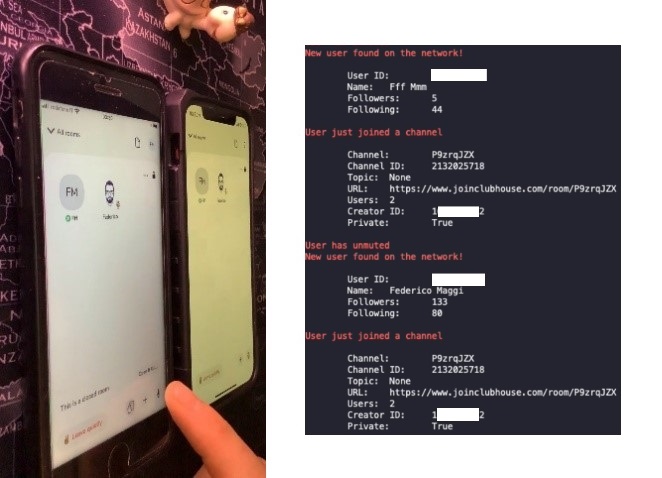

An attacker can infer who is talking to whom by analyzing network traffic and looking for real-time communication (RTC) packets. The screenshot above, taken from our demonstration using the ClubHouse app, shows how an attacker can automate this procedure and intercept the RTC control packets to obtain sensitive information about a private chat between two users.
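Our technical brief does not publish the interception tooling, so what follows is only a hedged illustration of the general idea, not the method used in our demonstration. It assumes the attacker already holds raw UDP payloads from a packet capture and shows how plain RTP media packets can be recognized from their fixed 12-byte header as defined in RFC 3550. The helper names `parse_rtp_header` and `streams_by_ssrc` are hypothetical. Counting distinct SSRC values gives a rough idea of how many senders are active, which hints at who is talking without decoding any audio.

```python
import struct
from dataclasses import dataclass, field
from typing import Dict, Iterable, List, Optional


@dataclass
class RtpHeader:
    version: int
    padding: bool
    extension: bool
    marker: bool
    payload_type: int
    sequence: int
    timestamp: int
    ssrc: int  # synchronization source: identifies one sender's stream
    csrcs: List[int] = field(default_factory=list)


def parse_rtp_header(packet: bytes) -> Optional[RtpHeader]:
    """Parse the fixed RTP header (RFC 3550, section 5.1); return None if it doesn't look like RTP."""
    if len(packet) < 12:
        return None
    b0, b1, seq, ts, ssrc = struct.unpack("!BBHII", packet[:12])
    if b0 >> 6 != 2:  # the RTP version field must be 2
        return None
    if 192 <= b1 <= 223:  # second-byte range RFC 5761 uses to tell RTCP apart from RTP
        return None
    cc = b0 & 0x0F  # number of contributing-source (CSRC) identifiers that follow
    end = 12 + 4 * cc
    if len(packet) < end:
        return None
    csrcs = list(struct.unpack(f"!{cc}I", packet[12:end]))
    return RtpHeader(
        version=2,
        padding=bool(b0 & 0x20),
        extension=bool(b0 & 0x10),
        marker=bool(b1 & 0x80),
        payload_type=b1 & 0x7F,
        sequence=seq,
        timestamp=ts,
        ssrc=ssrc,
        csrcs=csrcs,
    )


def streams_by_ssrc(payloads: Iterable[bytes]) -> Dict[int, int]:
    """Count RTP packets per SSRC, skipping anything that doesn't parse as RTP."""
    counts: Dict[int, int] = {}
    for payload in payloads:
        header = parse_rtp_header(payload)
        if header is not None:
            counts[header.ssrc] = counts.get(header.ssrc, 0) + 1
    return counts
```

Note that SRTP encrypts only the RTP payload and leaves this header readable, so stream metadata such as SSRCs stays visible even when the audio itself is protected. Signaling and control messages generally travel on a separate channel and need their own encryption.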

According to the response given by ClubHouse to the Stanford Internet Observatory, ClubHouse will implement proper encryption to prevent this and related attacks.

2. User Impersonation and Deepfake Voice

A malicious user could impersonate a public figure and, by cloning their voice, make them say things they never would, damaging their reputation. An attacker could also clone the voice of a famous trader, create a fake profile for them, lure users into joining a room, and endorse a particular financial strategy.

3. Opportunistic Recording

As stated in the terms of service of most (if not all) apps, the content of most audio-only social networks is meant to be ephemeral and "for participants only." However, an attacker could record a speaker, clone their account, automatically follow all of that account's contacts to make it look more authentic, join other rooms, and use the recorded speech sample to make a "cloned" voice say phrases that could tarnish the original speaker's reputation or even enable fraudulent business transactions.

4. Harassment and Blackmailing

How this is carried out depends on the structure of the app and the network. For example, on some platforms, an attacker who follows their victim is notified when that person joins a public room. Upon receiving the notification, the attacker could join that room, ask the moderator for permission to speak, and then say something or stream pre-recorded audio to blackmail the victim. We verified that all of this can easily be scripted to run automatically. Fortunately, most apps also have features to block and report abusive users.

5. Underground Services

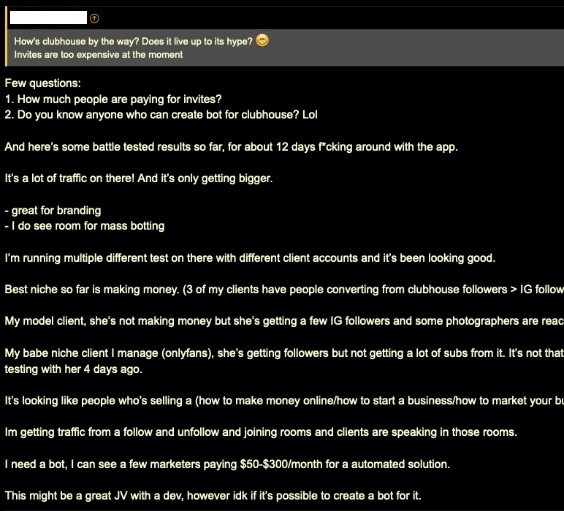

Right after ClubHouse's launch, we found active discussions about it on the surface Web. Some users were already discussing buying followers, and some alleged developers were offering to reverse engineer the API and build a bot in exchange for an invite, something we also verified to be feasible.

6. Audio Covert Channels

Using these platforms, threat actors can create covert channels for C&C, or hide and transmit information using steganography. If audio-centric social networks keep growing in popularity, attackers may begin to consider them a reliable alternative channel: for example, an attacker can create multiple rooms and have bots join them to dispatch commands, leaving no trace (except in the encrypted recordings, if any).

Security recommendations

To use audio-only apps more securely, we recommend these best practices for users of audio-only social networks:

- Join public rooms and speak as if in public. Users should only say things that they are comfortable sharing with the public, as there is a possibility that someone in the virtual room is recording (even if recording without written consent is against the Terms of Service of most, if not all, of these apps).

- Do not trust someone by their name alone. These apps currently have no account-verification processes implemented; always double-check that the bio, username, and linked social media contacts are authentic.

- Only grant the necessary permissions and share the needed data. For example, if users don't want the apps to collect all data from their address book, they can deny the permission requested.

Based on our technical analysis of the apps and communication protocols, we recommend that current and future service providers consider implementing the following features unless they have done so already:

- Do not store secrets (such as credentials and API keys) in the app. We found cases of apps embedding credentials in plain text right in the app manifest, which would allow any malicious actor to impersonate the app on third-party services.
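As a hedged sketch of how such embedded credentials can be spotted during an audit, the script below scans a decoded `AndroidManifest.xml` for `<meta-data>` entries whose names look like credentials and whose values are literal strings. It assumes the manifest has already been converted from the binary format inside the APK into plain XML (for example, with a decoding tool such as apktool); the helper name `find_embedded_secrets` and the keyword list are hypothetical choices, and a thorough audit would also need to cover resource files and compiled code.

```python
import re
import xml.etree.ElementTree as ET
from typing import List, Tuple

# Attributes written as "android:name" are stored under this namespace URI.
ANDROID_NS = "{http://schemas.android.com/apk/res/android}"

# Hypothetical keyword list; tune it for the app being audited.
SUSPICIOUS_NAME = re.compile(r"api[_.-]?key|secret|token|password|credential", re.IGNORECASE)


def find_embedded_secrets(manifest_xml: str) -> List[Tuple[str, str]]:
    """Return (name, value) pairs for <meta-data> entries that look like hard-coded secrets."""
    root = ET.fromstring(manifest_xml.strip())
    findings = []
    for meta in root.iter("meta-data"):
        name = meta.get(ANDROID_NS + "name", "")
        value = meta.get(ANDROID_NS + "value", "")
        # Values starting with "@" are resource references (e.g. @string/...),
        # not literal strings in the manifest, so they are skipped here.
        if value and not value.startswith("@") and SUSPICIOUS_NAME.search(name):
            findings.append((name, value))
    return findings
```

Any hit is a candidate for moving the credential behind a server-side component that the app authenticates against, instead of shipping it to every device.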

- Offer encrypted private calls. While there are certainly trade-offs between performance and encryption, state-of-the-art messaging apps support encrypted group conversations; their use case is different, but we believe that future audio-only social networks should offer a level of privacy on par with their text-based equivalents. For example, the Secure Real-time Transport Protocol (SRTP) should be used instead of RTP.

- User account verification. None of the audio-only social networks currently support verified accounts the way Twitter, Facebook, and Instagram do, and we have already seen fake accounts appearing on some of them. Until account-verification features are available, we recommend that users manually check whether the account they are interacting with is genuine (e.g., by checking the number of followers or the connected social network accounts).

- Real-time content analysis. All of the content-moderation challenges that traditional social networks face are harder on audio- or video-only social networks, because audio (or video) is intrinsically harder to analyze than text (e.g., speech-to-text conversion is resource-intensive). On the one hand, content inspection raises a clear privacy challenge, because it means the service has a way to tap into the audio streams. On the other hand, it offers some benefits, for instance, in prioritizing incidents.

The full version of our report can be found in our technical brief.