Key takeaways

- Growing reliance on AI has driven a rapid rise in AI-based tools. These applications have also become vectors for malicious activity, as this case involving Kuse.ai shows.

- Kuse is ordinarily a trusted workplace platform, but threat actors keep finding new social engineering methods, including abusing its file-sharing feature to host a phishing lure.

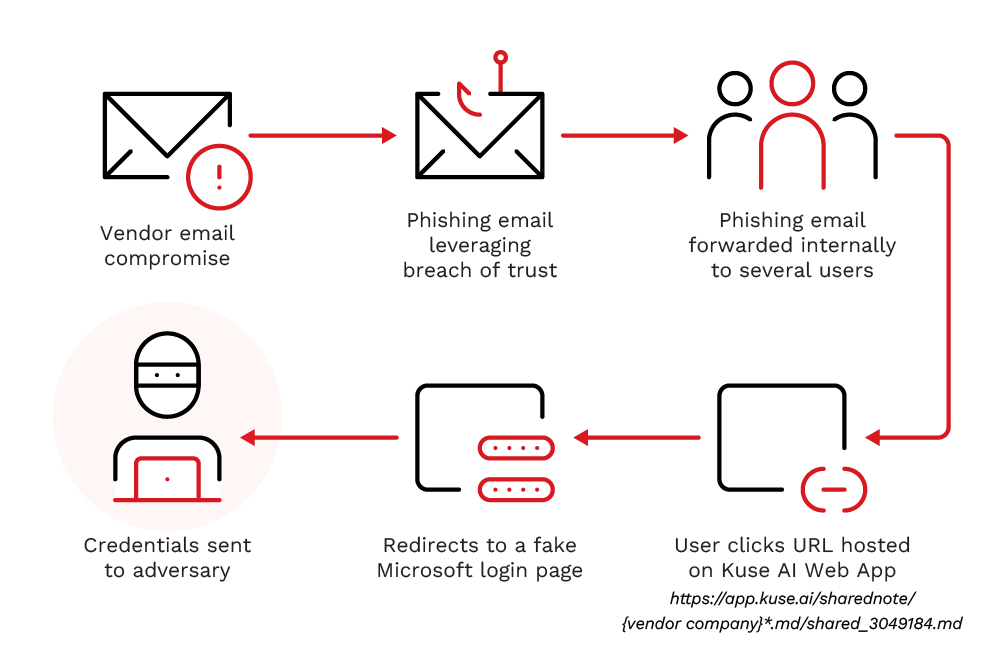

- In this case, threat actors executed a phishing attack that combined a deceptive URL on a legitimate domain with a blurred document image.

- Organisations therefore have to strengthen their security training and keep reminding employees that an application's good reputation does not guarantee the trustworthiness of its content.

As AI takes on a larger role in work and daily life, the number of AI apps is growing, and so is the range of attack vectors that threat actors are actively exploring. AI is reshaping the cybersecurity landscape, introducing both unprecedented opportunities and complex risks.

On 9 April 2026, the TrendAI Managed Services Team encountered a phishing attack that revealed another weakness attackers can use to host parts of a phishing chain, exploit trust, and ultimately harvest credentials. In this case, attackers abused the storage and sharing features of Kuse, a free AI web app.

This breach involved a supply chain attack, specifically a vendor email compromise (VEC): a compromised mailbox belonging to a trusted vendor was used to send a specially crafted phishing email that leveraged the existing relationship between the two organisations. Because the indicators contain specific organisation names, some IoCs in this article are partly redacted.

Because the sender was trusted, the malicious email was forwarded to the relevant users for processing. One user then clicked the phishing link and entered credentials on a fake login page.

Kuse.ai

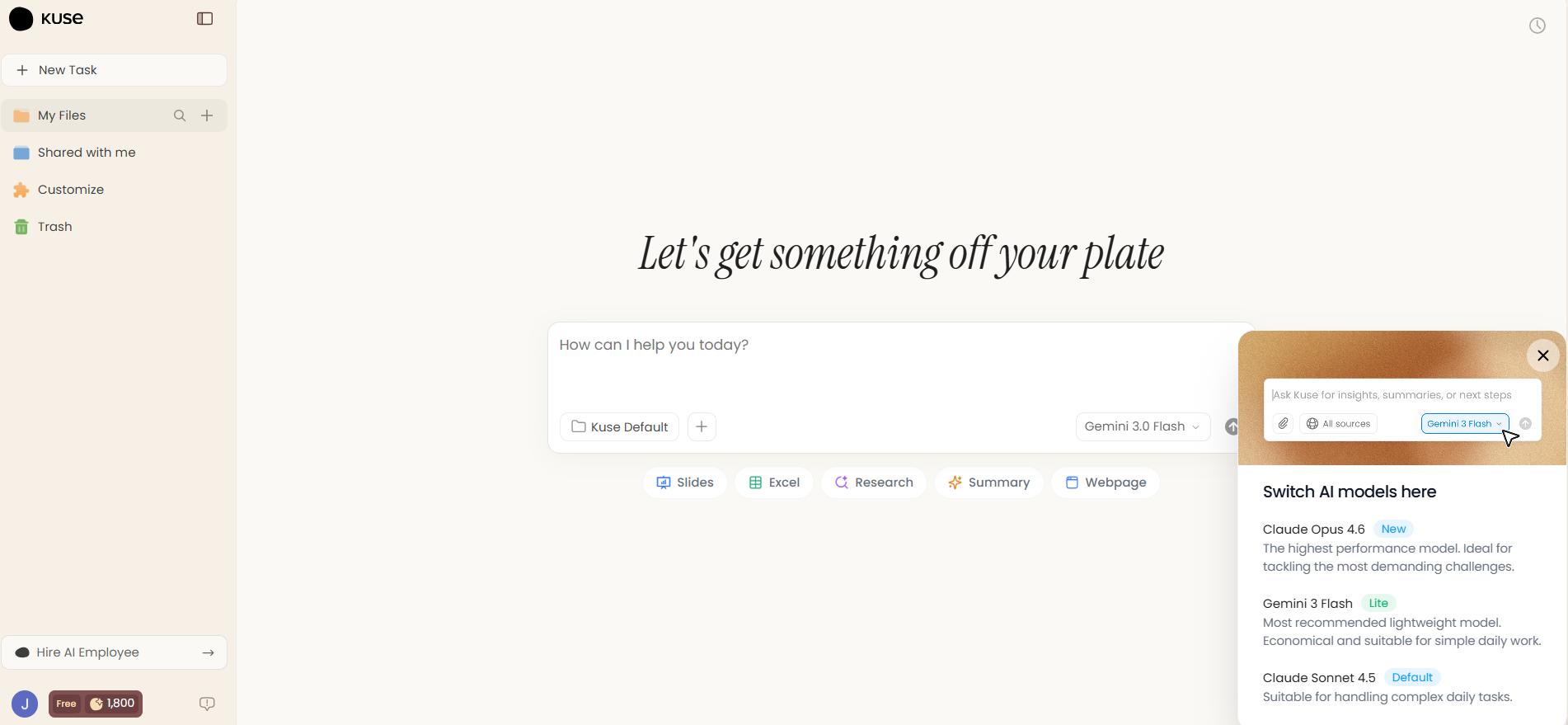

According to its website, Kuse.ai is an agentic AI co-worker that uses one’s work context to improve decision-making and execute end-to-end, multi-step workflows.

Upon logging in, users can upload documents or create a markdown note in their folders. They can then ask an AI chatbot to perform tasks using the uploaded files.

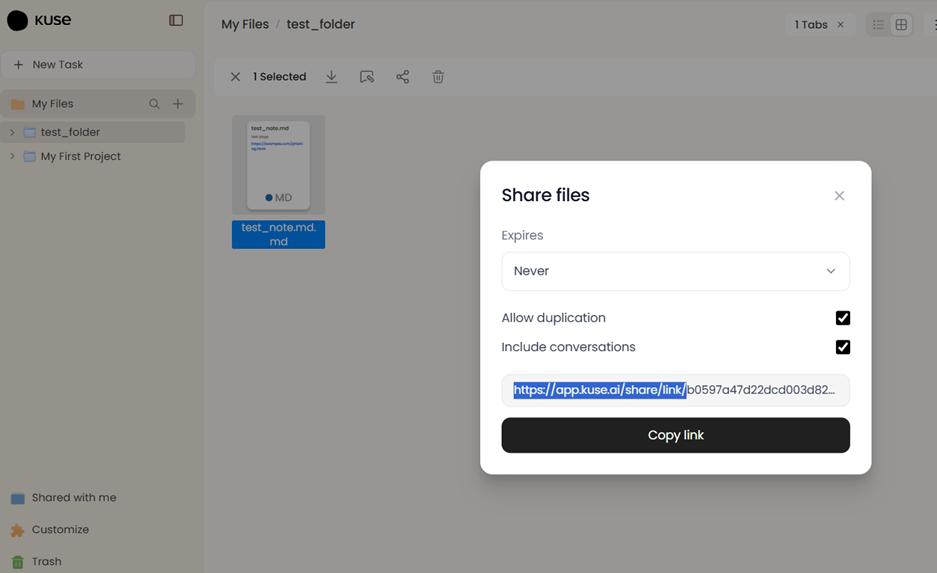

Each file on the account can be shared via a share button, which generates a link hosted under Kuse’s domain, app[.]kuse[.]ai.

Attackers abused this mechanism to host a fake blurred document that contained a link to a fake login page.

URL Analysis

- hxxps://app[.]kuse[.]ai/sharednote/{vendor company}%20S.L..md/shared_3049184.md

The URL used the legitimate domain app[.]kuse[.]ai, and its path contained URL-encoded spaces (%20), commas, and full stops. The path also mimicked a legitimate document by using the compromised vendor's company name. These links were presumably placed in emails sent from mailboxes belonging to the compromised vendor and aimed at the target organisation. The tactic was meant to confuse both users and automated scanners.

Because the Markdown file extension (.md) is less commonly used in phishing attempts than document (e.g., .pdf, .docx) and webpage (e.g., .html, .aspx) file extensions, it can bypass filter signatures and heuristic rules that focus on more typical malicious file extensions.
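The URL traits described above can be turned into a simple triage heuristic. The following Python sketch is illustrative only: the `SHARING_HOSTS` watchlist, the function name, and the flag wording are assumptions rather than part of any TrendAI detection logic, and the function expects a re-fanged URL as input.

```python
from urllib.parse import unquote, urlsplit

# Hypothetical watchlist of sharing hosts that serve user-generated content.
SHARING_HOSTS = {"app.kuse.ai"}


def shared_note_flags(url: str) -> list[str]:
    """Return reasons a re-fanged URL deserves a closer look (empty if none)."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    if host not in SHARING_HOSTS:
        return []
    flags = ["link to a trusted sharing host"]
    path = unquote(parts.path)  # decode %20 and similar escapes
    if path.lower().endswith(".md"):
        flags.append("Markdown note used as a document")
    if " " in path:
        flags.append("spaces in path, possibly a company-name lure")
    return flags
```

A heuristic like this is meant to raise a link for review, not to block it outright, since most links on a sharing platform are legitimate.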

User Experience and Redirection

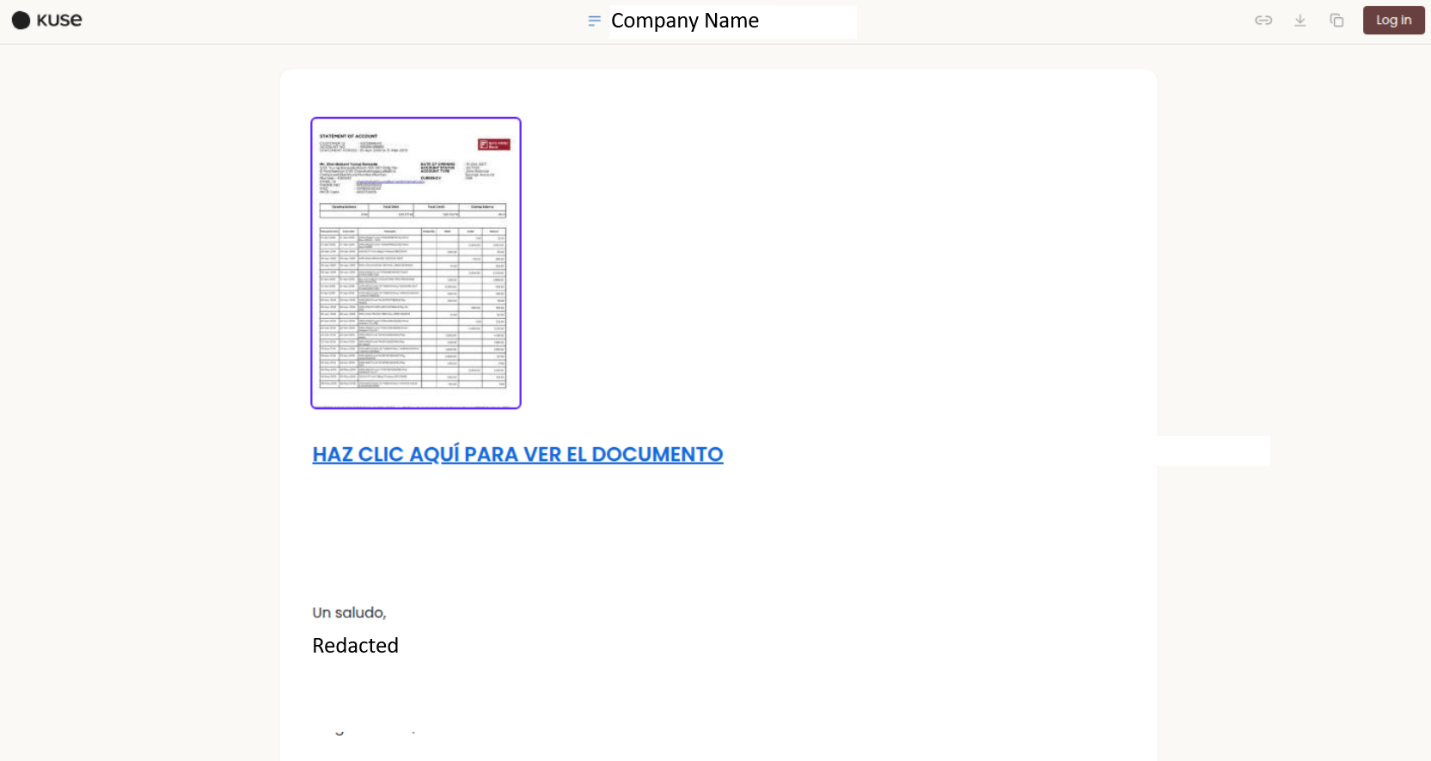

After clicking the phishing URL, the user was taken to the legitimate AI workspace app[.]kuse[.]ai, where the .md page was displayed as a blurred document preview. This falsified preview lured the user into clicking the malicious link below it, in the belief that doing so would reveal the full content. The link text, in Spanish, read “HAZ CLIC AQUÍ PARA VER EL DOCUMENTO”, which translates into English as “CLICK HERE TO VIEW THE DOCUMENT”.

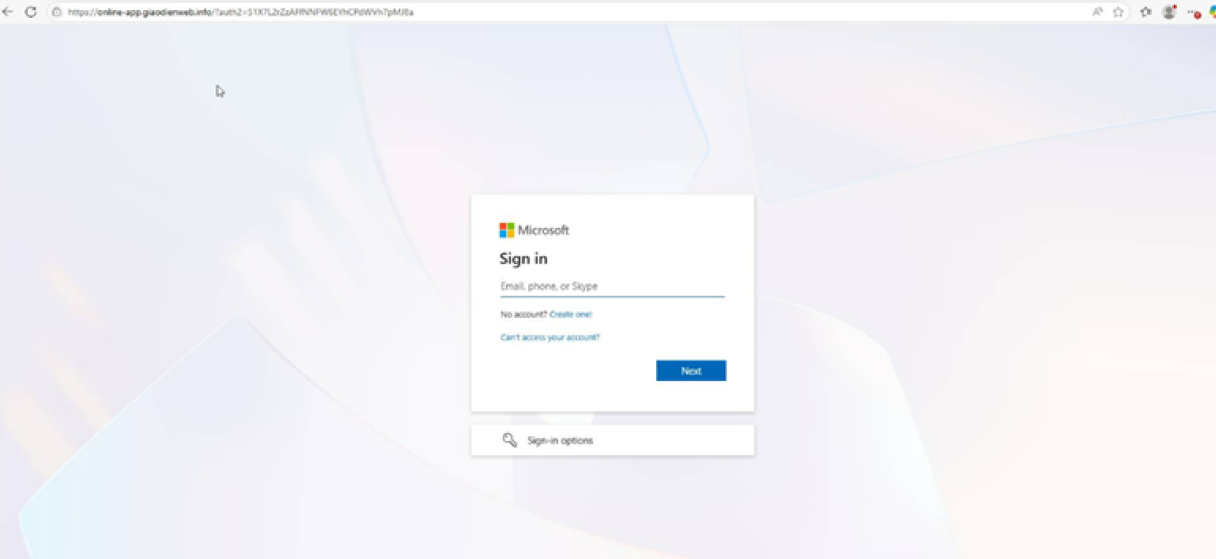

Instead, the hyperlink redirected the user to a fake Microsoft login page to collect user credentials:

- hxxps://onlineapp[.]ooraikaoo[.]info/?auth2=8rf22euu-2nxkebabDjjILlzldhQq2Pz
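The lure relies on a shared note whose hyperlink leaves the hosting platform. As a minimal sketch of how an analyst might surface that, the Python below extracts inline Markdown links and keeps those pointing to a different host. The exact markup of the malicious note was not published, so the regular expression, the function name, and the sample note in the usage comment are assumptions.

```python
import re
from urllib.parse import urlsplit

# Matches inline Markdown links and images of the form [text](http...).
INLINE_LINK = re.compile(r"\[([^\]]*)\]\((https?://[^)\s]+)\)")


def offsite_links(markdown_text: str, hosting_host: str) -> list[tuple[str, str]]:
    """Return (link text, URL) pairs whose host differs from the hosting platform."""
    results = []
    for text, url in INLINE_LINK.findall(markdown_text):
        host = (urlsplit(url).hostname or "").lower()
        if host != hosting_host.lower():
            results.append((text, url))
    return results


# Usage with a hypothetical note body (placeholder URLs, not real indicators):
# note = ("![preview](https://app.kuse.ai/img/blur.png)\n\n"
#         "[HAZ CLIC AQUÍ PARA VER EL DOCUMENTO](https://login.example.invalid/?auth2=abc)")
# offsite_links(note, "app.kuse.ai")
```

An off-platform link is not malicious by itself, but combined with a blurred preview and a call to action it is a strong signal for manual review.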

Conclusion

Threat actors are always looking for new ways to exploit the inherent trust placed in legitimate platforms. They abuse the storage and sharing capabilities of free services, as well as the growing interest in AI-powered web applications. Using the Markdown (.md) file extension as the delivery format, combined with a VEC to establish trust at the point of delivery, demonstrates a multi-layered social engineering approach designed to evade both automated defences and human scrutiny. This in turn highlights the need for layered protection and heightened user awareness.

As AI tools become more embedded in business workflows, their sharing and collaboration features present new surfaces for misuse. Similar to how threat actors have previously leveraged file-sharing services, such as Dracoon, to host intermediary phishing documents and GitHub's trusted reputation to distribute malware, the abuse of Kuse.ai follows an established pattern: weaponising platform legitimacy to circumvent security controls. Therefore, organisations must recognise that even highly reputable platforms can host untrustworthy content.

Recommendations

- Conduct regular user awareness training. Training should go beyond generic phishing awareness and include real-world scenarios involving AI platform abuse, VEC, and blurred document lures. Users should be educated on recognising social engineering cues regardless of the hosting platform's reputation.

- Verify links beyond the domain. A legitimate domain (e.g., app[.]kuse[.]ai) does not guarantee safe content. Users should scrutinise the full URL path, especially when documents are shared unexpectedly or contain urgency-driven calls to action.

- Treat VEC as a persistent threat. Emails from trusted vendors should not be exempt from security scrutiny. Organisations should implement policies that require secondary verification (e.g., a phone call or separate messaging channel) before acting on requests that involve clicking links or providing credentials, particularly when the email context is unusual.

- Enforce Multi-Factor Authentication (MFA) with phishing-resistant methods. Traditional MFA can be bypassed by reverse proxy toolkits. Organisations should adopt phishing-resistant authentication methods, such as FIDO2/WebAuthn hardware keys, to mitigate credential harvesting from fake login pages.

- Monitor and restrict AI platform sharing features. Security teams should assess which AI tools are in use within the organisation and evaluate whether their sharing and external link generation features introduce unmanaged risks. Where possible, outbound access to AI platform sharing URLs that are not business-critical should be restricted or monitored.

- Implement advanced email and URL filtering. Deploy email security solutions capable of inspecting URLs at the time of click rather than only at delivery. URL sandboxing and real-time reputation checks can help detect phishing pages hosted on otherwise trusted domains.

TrendAI Vision One™ Threat Intelligence Hub

TrendAI Vision One™ Threat Intelligence Hub provides the latest insights on emerging threats and threat actors, exclusive strategic reports from TrendAI™ Research, and TrendAI Vision One™ Threat Intelligence Feed in the TrendAI Vision One™ platform.

Emerging Threats: Kuse AI Web App Abused to Host Phishing Document

TrendAI Vision One™ Intelligence Reports (IOC Sweeping)

Indicators of Compromise (IoCs)

- 91.92.41[.]64

- hxxps://onlineapp[.]ooraikaoo[.]info/?auth2=8rf22euu-2nxkebabDjjILlzldhQq2Pz

- hxxps://app[.]kuse[.]ai/sharednote/{vendor company}%20S.L..md/shared_3049184.md
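
To sweep proxy or DNS logs with these indicators, they first need to be re-fanged and reduced to searchable hosts. The Python sketch below shows one way to do that; the function names and the `shared_platform_hosts` parameter are assumptions for illustration. Hosts of legitimate sharing platforms are excluded from the host-level sweep because matching on the whole domain would flag ordinary use, so those indicators are better matched on their full path.

```python
import ipaddress
from urllib.parse import urlsplit


def refang(indicator: str) -> str:
    """Turn a defanged indicator (hxxp, [.]) back into its usable form."""
    return (indicator.strip()
            .replace("hxxps://", "https://")
            .replace("hxxp://", "http://")
            .replace("[.]", "."))


def hosts_to_sweep(indicators, shared_platform_hosts=frozenset()):
    """Collect IPs and hostnames to search for, skipping shared platforms."""
    hosts = set()
    for indicator in indicators:
        value = refang(indicator)
        try:
            ipaddress.ip_address(value)  # raises ValueError if not an IP
            hosts.add(value)
            continue
        except ValueError:
            pass
        parts = urlsplit(value)
        if parts.scheme in ("http", "https") and parts.hostname:
            host = parts.hostname.lower()
            if host not in shared_platform_hosts:
                hosts.add(host)
    return hosts
```

Calling `hosts_to_sweep(iocs, shared_platform_hosts={"app.kuse.ai"})` on the list above would keep the IP address and the credential-harvesting host while leaving the Kuse domain out of the host-level search.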