
The Real Risk of Vibecoding

This blog examines how AI‑driven vibecoding accelerates software development while increasing security risk by outpacing traditional review and ownership. It explains why security needs to move earlier and be integrated into modern development workflows.

Describe what you want in plain language and let AI generate the code for you: that’s the promise of vibecoding. For many teams, it feels like magic. Development moves faster. Prototypes become products almost overnight. Barriers to building software are lower than they’ve ever been. By dramatically lowering the cost of producing code, AI increases the volume and speed of software change, often faster than teams can review, govern, or fully understand it.

But here’s the uncomfortable truth:

Vibecoding doesn’t just accelerate development; it accelerates risk.

Not because AI is “bad at coding” or is the villain here, but because vibecoding changes how software gets built, reviewed, and owned. By vibecoding, I mean workflows where developers, or AI agents acting on their behalf, generate meaningful, production‑bound code from natural‑language prompts, often with limited line‑by‑line scrutiny. This is different from simple autocomplete or standard IDE assistance. And that shift has real security consequences.

Speed without understanding

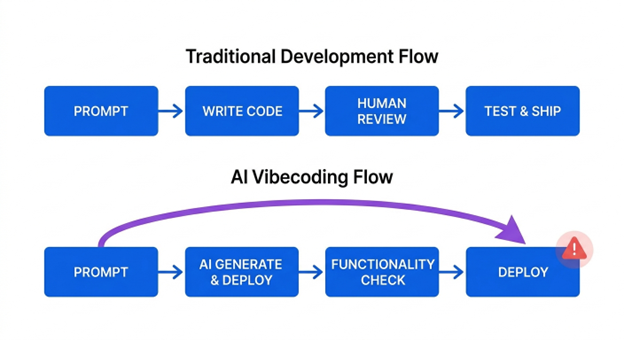

Traditional development has built-in friction. You write code. You review it. Someone else reviews it. You test it. You argue about it. Only then does it ship.

Vibecoding collapses that process.

When code is generated from prompts, developers often focus on one question: Does it work?

- Not: Is it safe?

- Not: Do I fully understand what this code is doing?

In many cases, the person shipping the application didn’t write the code line by line and couldn’t easily explain it if asked. That doesn’t make them careless; it makes them human. Vibecoding doesn’t remove existing controls. It pushes more change through them, faster, exposing where review, policy, and approvals are late, manual, or disconnected.

Vibecoding rewards momentum, not scrutiny.

The result is production code that works as intended and passes basic tests, but hasn't been deeply reviewed, threat-modelled, or validated for security. Functionality becomes the finish line. Security becomes “something we’ll handle later.”

Every prompt ships more than you expect

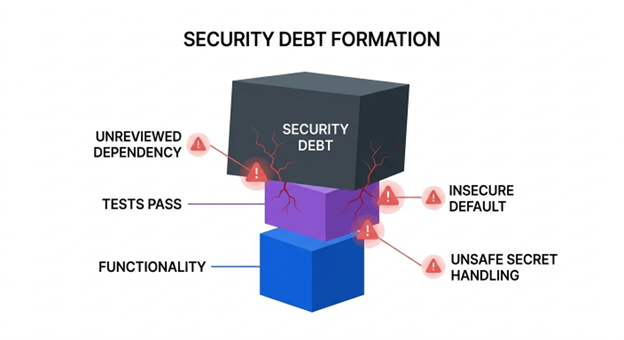

AI doesn’t generate code in isolation. A prompt rarely produces just business logic; it often brings along framework choices, helper packages, and implementation shortcuts that may never be consciously reviewed.

In practice, vibecoding can introduce:

- Unintended dependencies: A prompt for OAuth login may pull in a helper library or starter template the developer never explicitly chose.

- Risky defaults: A generated service may inherit permissive logging, broad network bindings, or relaxed validation that are fine for demos but unsafe in production.

- Weak secret handling: Generated examples may normalise placeholder secrets, hard-coded test tokens, or the logging of sensitive values.

- Happy‑path logic: The code works for valid users but omits authorisation edge cases, abuse limits, or failure handling.
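To make the “risky defaults” and “weak secret handling” bullets concrete, here is a minimal Python sketch of the kind of automated check that could flag them in generated code before it reaches review. The rule names, regular expressions, and the `scan` helper are hypothetical and deliberately crude: they assume Python-style source, will miss many real cases, and will produce false positives, which is why teams would normally rely on a maintained scanner rather than hand-rolled patterns.

```python
import re
from dataclasses import dataclass

# Hypothetical, deliberately simple rules for illustration only.
RULES = {
    # Service listening on every network interface.
    "broad-bind": re.compile(r"""host\s*=\s*["']0\.0\.0\.0["']"""),
    # Framework debug mode left switched on.
    "debug-enabled": re.compile(r"\bdebug\s*=\s*True\b"),
    # Literal string assigned to a secret-looking name.
    "hardcoded-secret": re.compile(
        r"""(?i)\b(api_key|secret|token|password)\s*=\s*["'][^"']+["']"""
    ),
    # Logging call that mentions a secret-looking name.
    "logs-secret": re.compile(r"(?i)\blog(?:ger)?\.\w+\(.*\b(password|token|secret)\b"),
}


@dataclass
class Finding:
    line_no: int
    rule: str
    text: str


def scan(source: str) -> list[Finding]:
    """Return one Finding per (line, rule) match in the given source text."""
    findings = []
    for line_no, line in enumerate(source.splitlines(), start=1):
        for rule, pattern in RULES.items():
            if pattern.search(line):
                findings.append(Finding(line_no, rule, line.strip()))
    return findings


# Example: three lines of the sort a quick prompt might produce.
generated = "\n".join([
    'API_KEY = "sk-test-123"',
    'logger.info("login ok for %s, token=%s", user, token)',
    'app.run(host="0.0.0.0", debug=True)',
])
for finding in scan(generated):
    print(finding.line_no, finding.rule)
# 1 hardcoded-secret
# 2 logs-secret
# 3 broad-bind
# 3 debug-enabled
```

Pattern matching like this only catches the obvious cases. Happy-path logic, such as a missing authorisation check, generally needs tests or a human reviewer to spot.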

And because each change feels small, whether it’s “just a helper function” (a small piece of supporting code) or “just a quick endpoint” (a new API added in a hurry), it’s easy to underestimate the risk. This is how security debt forms: not from one catastrophic mistake, but from hundreds of fast, reasonable decisions made under pressure to ship.

The ownership problem no one talks about

One of the biggest risks in vibecoding isn’t that nobody owns the code; it’s that ownership becomes fragmented.

The committer may be clear, but intent, generation path, dependency rationale, and review independence often are not. Responsibility is spread across the prompt author, the AI agent, the reviewer, and the service owner.

The original developer may have moved on. The code may not look familiar to anyone. The logic may not follow the team’s usual patterns. Suddenly, fixing a “small” issue turns into a scavenger hunt:

- Who generated this code?

- Why was this library added?

- Is this behaviour intentional?

- Can we safely change it?

The time lost figuring that out often dwarfs the time it would have taken to prevent the issue in the first place.

Even when ownership is identifiable, review independence can still break down. Teams increasingly rely on the same AI system to both generate and validate changes, creating the appearance of review without true separation of duties.
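One way to make provenance and review independence checkable rather than implicit is to record them with each change and test them automatically. The sketch below assumes a hypothetical `Change` record (the fields `prompt_author`, `generated_by`, `approvers`, and `ai_reviewers` are illustrative, not taken from any particular tool) and flags the gaps described above: missing generation context, no approver independent of the author, and an AI system reviewing its own output.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Change:
    """Hypothetical record of who and what produced a change, and who approved it."""
    committer: str
    prompt_author: Optional[str] = None   # person who wrote the prompt, if any
    generated_by: Optional[str] = None    # AI system that generated the code, if any
    approvers: List[str] = field(default_factory=list)     # human approvals
    ai_reviewers: List[str] = field(default_factory=list)  # automated/AI reviews


def review_problems(change: Change) -> List[str]:
    """Return human-readable problems with the change's provenance or review."""
    problems = []
    # Generation context: an AI-generated change should record who prompted it.
    if change.generated_by and not change.prompt_author:
        problems.append("AI-generated change has no recorded prompt author")
    # Separation of duties: someone other than the committer/prompt author must approve.
    authors = {change.committer, change.prompt_author} - {None}
    if not any(a not in authors for a in change.approvers):
        problems.append("no human approver independent of the author(s)")
    # An AI system reviewing its own output is not an independent review.
    if change.generated_by and change.generated_by in change.ai_reviewers:
        problems.append(f"{change.generated_by} both generated and reviewed this change")
    return problems


# Example: code generated by an agent, approved only by its own prompt author,
# and "reviewed" by the same agent.
change = Change(
    committer="alice",
    prompt_author="alice",
    generated_by="coding-agent",
    approvers=["alice"],
    ai_reviewers=["coding-agent"],
)
for problem in review_problems(change):
    print(problem)
# no human approver independent of the author(s)
# coding-agent both generated and reviewed this change
```

In practice this information might come from commit trailers or pull-request metadata; how it is captured matters less than checking it on every change.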

Vibecoding doesn’t break security controls; it stress-tests them

By lowering the cost of producing code, AI dramatically increases the volume and speed of software change. When review, ownership, policy enforcement, and accountability don’t scale at the same pace, teams lose control over what is being shipped.

The real risk isn’t just insecure code. It’s uncontrolled software change.

None of these risks are new. Developers have always reused libraries, inherited defaults, and shipped code under pressure. What AI changes is the scale, speed, and perceived effort of introducing those risks. When generating working code feels nearly free, teams make more changes, more often, with less scrutiny, and existing controls are stress-tested in ways they weren’t designed for.

Many of those controls still act late, at final review or after release. In a vibecoding world, that’s already too late.

By the time an issue is discovered, the code is in production, the developer context is gone, and the fix is disruptive. That’s when security feels like a blocker instead of a guardrail.

What actually works in a vibecoding world

If vibecoding is here to stay, and it is, security has to adapt to how software is actually built today. That means four practical shifts:

- Catch issues earlier, not louder: Early signal beats late alerts.

- Make guardrails automatic: Security can’t depend on developers remembering every rule while prompting.

- Focus on shared context: Developers and Security need to see the same issue without handoffs.

- Optimise for flow, not friction: Controls that fit existing workflows get adopted.
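As an example of a guardrail that runs automatically instead of depending on memory, here is a minimal Python sketch of a CI-style dependency gate: it compares a requirements file before and after a change and fails if newly added packages are not on an approved list. The allowlist contents, the function names, and the idea of feeding it the base and head versions of `requirements.txt` are assumptions for illustration; the name normalisation follows the PEP 503 convention for Python package names.

```python
import re
from typing import List, Set

# Hypothetical allowlist; in practice it would live in a reviewed config file.
APPROVED = {"requests", "fastapi", "pydantic"}


def normalise(name: str) -> str:
    """Normalise a Python package name (PEP 503 style)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def package_names(requirements_text: str) -> Set[str]:
    """Extract normalised package names from requirements.txt-style text."""
    names = set()
    for raw in requirements_text.splitlines():
        line = raw.split("#", 1)[0].strip()
        if not line or line.startswith("-"):
            continue  # skip blank lines, comments, and pip options like -r / -e
        # The name ends at the first version specifier, extra, marker, or space.
        match = re.match(r"[A-Za-z0-9][A-Za-z0-9._-]*", line)
        if match:
            names.add(normalise(match.group(0)))
    return names


def new_unapproved(before: str, after: str) -> List[str]:
    """Packages present in `after` but not `before` that are not on the allowlist."""
    added = package_names(after) - package_names(before)
    approved = {normalise(n) for n in APPROVED}
    return sorted(added - approved)


def gate(before: str, after: str) -> int:
    """CI-style result: 0 if clean, 1 if the change adds unapproved packages."""
    unapproved = new_unapproved(before, after)
    for name in unapproved:
        print(f"unapproved new dependency: {name}")
    return 1 if unapproved else 0


# Example: a generated OAuth helper quietly adds a JWT library.
base = "requests==2.31.0\nfastapi>=0.110\n"
head = base + "pydantic>=2\nPyJWT[crypto]==2.8.0  # added with OAuth helper\n"
exit_code = gate(base, head)  # prints: unapproved new dependency: pyjwt
```

A non-zero result is what a CI step would typically treat as a failure, so the developer sees the unapproved package in the same pull request that introduced it, not after release.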

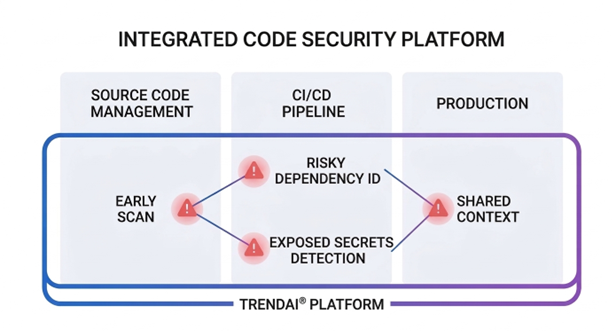

Where platforms start to matter

This is where platforms, not point tools, become important. When code security is integrated into the same place teams already manage risk, and tied directly into CI/CD workflows, it becomes part of how software is built.

TrendAI’s view is that these shifts require security to be embedded into development workflows, so that review, policy, and ownership scale alongside AI‑generated change.

The key isn’t the feature list. It’s the timing. Security that shows up early feels like guidance. Security that shows up late feels like punishment.

Vibecoding isn’t reckless; ignoring its risks is

Vibecoding is powerful. It’s creative. It’s changing who gets to build software and how fast ideas become reality. But speed without guardrails doesn’t just move code faster; it moves risk faster.

The organisations that succeed won’t be the ones that ban it. They’ll be the ones that recognise its risks early and design for them.

Because in the end, the real risk of vibecoding isn’t AI writing insecure code. It’s humans shipping code they never had a chance to secure.